What the Anthropic-Pentagon showdown reveals about AI, power, and the rules we haven’t written yet.

On Friday, February 27, President Trump directed every federal agency to stop using Anthropic's technology. Hours later, Defense Secretary Pete Hegseth designated the company a supply-chain risk, a penalty previously reserved for Chinese telecom firms suspected of espionage. By nightfall, OpenAI had signed its own deal with the Pentagon, claiming it had secured the exact same safety guardrails that Anthropic had demanded and been punished for requesting.

Then Trump authorized strikes on Iran — and, according to the Wall Street Journal, U.S. Central Command planned and executed them with the help of Anthropic's Claude, the very tool the president had just banned. Commands around the world, including CENTCOM, were using Claude for intelligence assessments, target identification, and simulating battle scenarios even as the ban was announced. The technology was too deeply embedded to switch off.

That detail, more than any argument about contracts or principles, reveals what this episode is actually about: not a dispute between two parties, but the collision that inevitably occurs when transformative technology outpaces the institutions meant to govern it.

The Anthropic-Pentagon showdown is not ultimately about Dario Amodei's negotiating tactics, Pete Hegseth's temperament, or Sam Altman's opportunism. It's about a governance gap at the center of the most consequential technology of our era, and about what fills that gap when those institutions fail to show up.

The Gap

Rules governing military AI do exist. The Fourth Amendment constrains government searches. FISA establishes a court to authorize surveillance. Intelligence oversight committees provide congressional review. DoD Directive 3000.09 sets guidelines for autonomous weapons. Executive Order 12333 governs intelligence collection. Inspectors general investigate misuse.

The problem is not absence; it's that this architecture was built for a different technological era, and its seams are now load-bearing walls.

Consider the commercially available data loophole. Under current law, intelligence agencies can purchase bulk location data, browsing history, and financial records from brokers without a warrant, then use AI to aggregate it into comprehensive individual profiles at any scale. The Defense Intelligence Agency has openly acknowledged doing this, and its own internal memo stated that the Supreme Court's Carpenter decision, which requires a warrant for historical cell-site location records, does not apply to commercially purchased data. When OpenAI's head of national security partnerships assured a questioner that the Pentagon has "no legal authority" for mass surveillance, she was making a technically defensible but substantively incomplete claim. The legal framework permits activities that are functionally indistinguishable from mass surveillance; it just doesn't call them that.

Or consider autonomous weapons. DoD Directive 3000.09, the primary governance document, doesn't prohibit fully autonomous weapons. It establishes guidelines for their design and testing, and it's a policy directive, not a statute, meaning any administration can revise or rescind it. There is no law that prevents the Pentagon from deploying weapons systems that select and engage targets without human intervention.

These aren't hypothetical gaps. They're the specific issues that led to the breakdown of the Anthropic negotiation.

Two Wrong Tools

Former Air Force Secretary Frank Kendall, who led the Department of the Air Force under President Biden, wrote an op-ed last week that cut through the noise. Both sides, he argued, are reaching for the wrong instruments.

Anthropic is wrong to try to solve a governance problem through contract language. A private company dictating the terms of military operations creates a troubling precedent. The government can't negotiate bespoke ethical frameworks with every supplier. If Raytheon sold the Pentagon a missile and then demanded veto power over which targets it could strike, the absurdity would be self-evident. The fact that AI is software rather than hardware doesn't change the underlying principle: once a product is sold, the buyer, particularly a democratic government, should determine how it's used within the law.

And there's a harder question the principle-over-pragmatism framing obscures: how enforceable were Anthropic's terms, really? Free-standing contractual prohibitions against "mass surveillance" and "autonomous weapons" may sound robust, but these concepts resist crisp definition. What counts as "mass"? What constitutes "autonomy"? A drone that selects targets independently is clearly autonomous. A system that generates a prioritized target list for human review may not be. Contract terms that depend on ambiguous definitions can create operational paralysis without actually preventing the outcomes they're designed to avoid.

But the Pentagon is equally wrong to fill the governance gap with executive coercion. Designating an American company as a supply-chain risk for declining to accept contract changes isn't governance; it's extortion. Section 3252, the statute underlying the designation, was designed to protect against foreign adversary infiltration of the defense supply chain. Its operative verbs are "sabotage," "subvert," and "maliciously introduce unwanted function." Its legislative history points exclusively to foreign threats. A former Trump administration AI policy advisor called Hegseth's actions "almost surely illegal" and "attempted corporate murder."

Kendall identified the logical contradiction at the heart of the Pentagon's position: it simultaneously argued that Claude was so essential to national security that the Defense Production Act should be invoked to compel its availability, and so dangerous to national security that no defense contractor should be permitted any commercial relationship with Anthropic. As Kendall observed: "Both cannot be true."

The OpenAI Arbitrage

The same governance gap made OpenAI's deal possible, and it made that deal unstable almost immediately.

OpenAI's initial approach was to cite existing law as the safeguard rather than create new contractual prohibitions. The contract stated that AI systems won't be used for "unconstrained monitoring of U.S. persons' private information," framing that commitment as consistent with a list of existing authorities: the Fourth Amendment, the National Security Act of 1947, FISA, Executive Order 12333, and applicable DoD directives. As George Washington University law professor Jessica Tillipman noted, the published excerpt "does not give OpenAI an Anthropic-style, free-standing right to prohibit otherwise-lawful government use." In effect, it said only that the Pentagon can't break current law: a tautology, not a safeguard.

The criticism was immediate. By Monday night, three days after the deal was signed, OpenAI amended the contract to add explicit, free-standing prohibitions against domestic surveillance of U.S. persons and against using commercially acquired personal data for tracking. These are, notably, the exact protections Anthropic had demanded and been blacklisted for requesting. Altman acknowledged the original deal had been rushed: "We shouldn't have rushed to get this out on Friday. The issues are super complex … I think it just looked opportunistic and sloppy."

The amendment is substantive. It directly addresses the commercially available data loophole and goes beyond mere reference to existing law. Combined with OpenAI's architectural protections (cloud-only deployment, forward-deployed engineers with security clearances, and retention of its safety stack), the revised deal creates meaningful barriers to the most concerning use cases.

But consider what actually happened: the most consequential AI-military contract in history was signed on a Friday, criticized over the weekend, and rewritten by Monday night. The rules governing the use of AI in national security were drafted and redrafted over a 72-hour PR cycle. That's not governance. That's improvisation under pressure, and it underscores the point both companies have been making: bilateral negotiations between tech CEOs and defense secretaries are no substitute for durable law.

The Deterrence Problem

Most analysis of this episode, including the first draft of this piece, underweights the Pentagon's strategic rationale.

The Defense Department isn't acting out of pure institutional arrogance. It operates on a genuine belief, shared across administrations, that AI is a decisive military technology, and that the window to establish advantage is narrow. China is investing massively in military AI, with fewer institutional constraints on deployment. Russia has signaled its willingness to develop autonomous weapons systems. The U.S. intelligence community has assessed that AI will be central to future warfare, potentially as transformative as nuclear weapons were in the mid-20th century.

In this frame, governance constraints that slow military AI development aren't just bureaucratic friction; they are a strategic vulnerability. If the U.S. constrains its own military AI development while adversaries don't, democratic governance becomes a competitive disadvantage. This is a real tension, not a manufactured one, and it's what makes AI governance fundamentally harder than regulating automobiles or appliances.

The counterargument, and it's a strong one, is that the history of arms control shows that governance and strategic advantage are not always opposed. Nuclear deterrence was built alongside arms-control frameworks that constrained the most dangerous applications while preserving strategic capability. The question is whether AI governance can achieve a similar balance: constraining the uses that threaten democratic values (mass domestic surveillance, weapons systems that remove human judgment from lethal decisions) without constraining the uses that provide legitimate strategic advantage (intelligence analysis, logistics, war-gaming, targeting support with human oversight).

That balance is achievable. But finding it requires the kind of deliberate, technically informed policymaking that only Congress can provide, and for which no contract negotiation or executive directive can substitute.

The Structural Shift

Zoom out from the personalities and look at what this episode reveals about the AI industry’s structural relationship with government power.

The traditional defense-industrial relationship is built on dependency. Lockheed Martin, Raytheon, and Northrop Grumman derive a large share of their revenue from military contracts, giving the Pentagon immense leverage. If the government says jump, defense contractors negotiate the height.

AI companies operate under fundamentally different economics. Anthropic’s $200 million Pentagon contract, while meaningful, represented roughly 2% of its reported annual revenue run rate. The consumer and enterprise AI markets are vast, fast-growing, and global. Government business is a complement, not a foundation. This structural independence enabled Anthropic to say no — and it’s what made the Pentagon’s coercive response feel disproportionate.

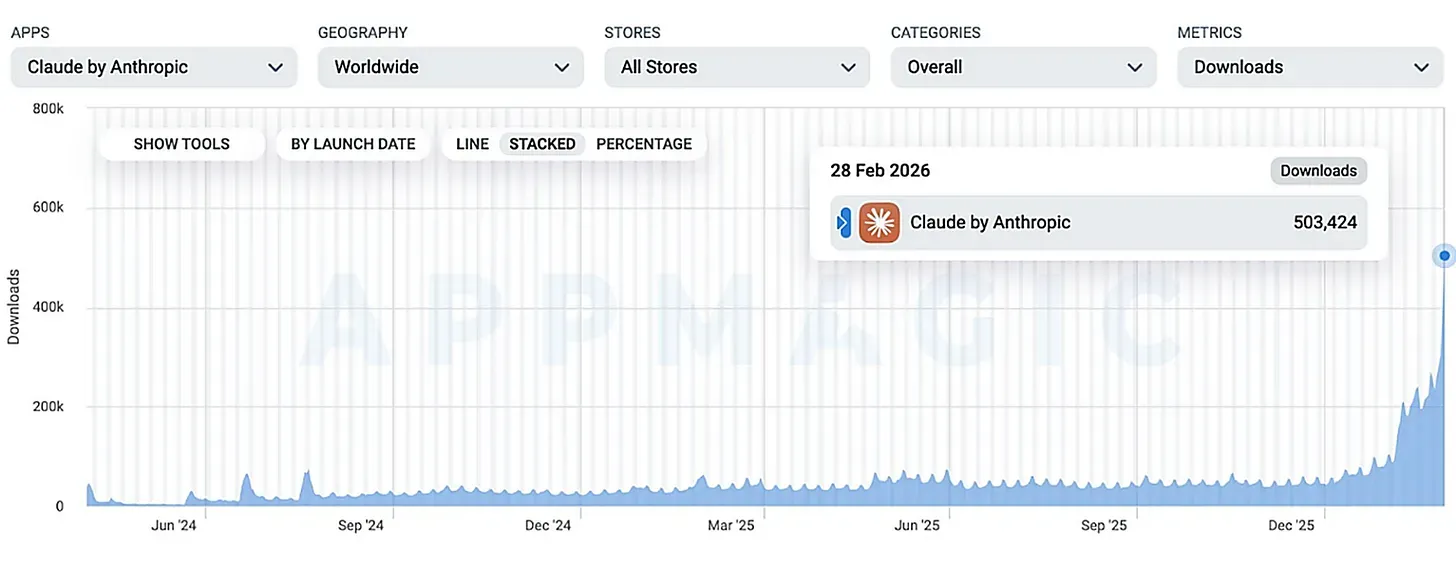

The consumer response illustrated the dynamic. In the 72 hours after Anthropic was blacklisted, Claude surged to No. 1 on Apple’s App Store, overtaking ChatGPT. Daily sign-ups broke records. A “QuitGPT” movement spread across social media. Whether this translates into durable revenue or just a transient spike in attention remains to be seen. But the signal is real: for a significant segment of AI consumers, a company’s values are a purchasing criterion, and government confrontation can increase commercial viability rather than diminish it.

For the Pentagon, this should be a sobering data point. The tools of coercion that work on companies dependent on government contracts may have the opposite effect on companies whose primary market is elsewhere. Punishing AI companies for asserting values that resonate with consumers and engineers risks driving talent and market share toward those very companies, and away from the vendors the Pentagon would prefer.

What Actually Needs to Happen

Every serious voice in this debate (Anthropic, OpenAI, the Pentagon, legal scholars, former defense secretaries, bipartisan congressional leaders) converges on the same conclusion: Congress needs to legislate.

The specifics are remarkably clear. Congress needs to address the commercially available data loophole that allows intelligence agencies to purchase bulk personal data without warrants. It needs to establish statutory requirements for human oversight of AI in lethal decision-making, replacing revocable policy directives with durable law. And it needs to define boundary conditions for government coercion of technology companies, ensuring that national security tools designed for foreign adversaries cannot be weaponized against domestic companies in contract disputes.

This is, admittedly, an optimistic prescription. Congress legislates slowly. AI evolves quarterly. The classification of military use cases makes public deliberation difficult. Lawmakers lack technical fluency. And the political incentives cut against action: constraining national security is politically risky, and AI companies are polarizing.

But the alternative is demonstrably inadequate. The Iran strikes showed what happens when governance relies on executive directives alone: the president banned a technology on a Friday afternoon, and the military used it in combat that same evening, because the systems were too deeply embedded to decouple on command. The OpenAI amendment showed what happens when governance relies on contracts: the most important AI-military deal in history was rewritten over a weekend because of public criticism, not legal process. If a presidential order can't be implemented in real time and a landmark contract can't survive 72 hours of scrutiny, neither instrument is capable of governing transformative technology.

Without legislation, AI governance will continue to be improvised through bilateral negotiations between defense secretaries and startup CEOs, with outcomes determined by political leverage rather than democratic deliberation. Each confrontation will set ad hoc precedents. Each company will make different compromises. And the rules governing how the world's most powerful military uses the most powerful technology in human history will remain a patchwork of executive orders, agency directives, and procurement disputes, designed for a world that no longer exists.

The Anthropic-Pentagon confrontation will be remembered not for who won or lost the immediate battle, but for what it exposed: a democracy deploying transformative technology without democratically established rules for its use. A president banned a company's AI on a Friday afternoon, and the military relied on it to bomb another country that same evening. That's not a policy dispute. It's a governance gap, and no contract, no executive order, and no App Store ranking can close it. Only Congress can. The question is whether it will.