There is a pattern that repeats across every general-purpose technology in history. The capability arrives. Adoption spreads. And then, nothing. Productivity statistics refuse to move. Economists scratch their heads. Pundits declare the technology overhyped. Years later, the gains finally appear, and everyone pretends they saw it coming.

We are living through that pattern again. In a survey of nearly 6,000 executives across the United States, the United Kingdom, Germany, and Australia published in early 2026, 89% reported no measurable impact from AI on their labor productivity over the past three years. PwC found that 56% of CEOs saw neither increased revenue nor decreased costs. ManpowerGroup's Global Talent Barometer showed that workers' regular use of AI increased by 13% in 2025, but their confidence in the technology's utility fell by 18%. People are using AI more and believing in it less.

And yet. Erik Brynjolfsson's analysis of revised Bureau of Labor Statistics data suggests U.S. productivity grew roughly 2.7% in 2025, nearly double the prior decade's average. A small but growing cohort of firms is automating end-to-end workstreams with AI agents, compressing weeks of work into hours. At the 95th percentile, something is clearly working. The question is why it isn't working everywhere.

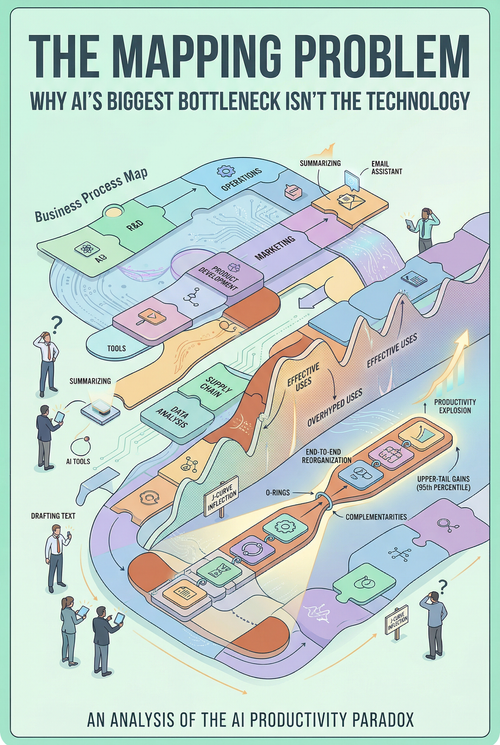

A new field experiment from INSEAD and Harvard Business School offers the most compelling answer I've seen: a central and previously underappreciated constraint on AI's firm-level value is not access to the technology, not skill in using it, and not even willingness to adopt it. It is a cognitive search problem, which the authors call the mapping problem: the challenge of identifying where and how AI creates value within a firm's production process.

This distinction matters enormously. It changes how firms should invest in AI, how policymakers should support adoption, and how we should interpret the current productivity data. Let me explain why.

The Experiment

Hyunjin Kim, Dahyeon Kim, and Rembrand Koning designed their field experiment as part of the AI Founder Sprint, a three-month global startup accelerator at INSEAD. They enrolled 515 high-growth startups from across the world: a third from the Middle East and Africa, a quarter from Asia-Pacific, a fifth from Europe, and a fifth from the Americas. The median firm had four people and was founded in 2024.

Every firm in the accelerator received the same baseline support: roughly $25,000 in API credits and partner tools from Google Cloud, OpenAI, NVIDIA, and Manus AI. Weekly three-hour hands-on technical training covering rapid prototyping, context engineering, RAG, agentic workflows, and vibe-coding. Demo days, pitch practice, and exposure to leading venture capitalists. Weekly progress reports. Peer learning groups. Office hours with mentors.

The only difference was what firms learned in their weekly workshop sessions. Starting in the third week, treated firms received case studies showing how other companies had reorganized their production processes around AI. Control firms received standard entrepreneurship case studies — building customer profiles, designing validation tests, and lean startup methodology.

This design feature makes the experiment exceptional. Both groups had the same tools. The same credits. The same technical training. The same mentors. The only variable was how they were taught to think about where AI fits in their business. The experiment doesn't test whether AI works. It tests whether knowing where to put it matters.

What Happened

Treated firms identified 2.7 additional AI use cases during the program, a 44% increase over control firms. But the number alone understates what changed. The new use cases spanned 0.84 additional distinct functional categories, indicating that firms weren't just using AI more intensively in the same places. They were expanding into new areas of their business: product development, strategic decision-making, and business operations. The gains were largest in precisely the domains that require genuine reorganization (rethinking how work is structured) and smallest in domains like writing and research, where AI slots into existing workflows without changing anything fundamental.

This shift in AI use produced real performance differences. Treated firms completed 12% more tasks, driven entirely by internal activities: building, prototyping, and developing. They were 18% more likely to acquire paying customers. They generated 1.9 times more revenue. These are not lab effects on stylized tasks. These are actual business outcomes measured across a diverse global sample.

But the most revealing finding is in the distribution. Revenue gains through most of the distribution were small, but they exploded in the upper tail: at the 90th and 95th percentiles, treated firms dramatically outperformed control firms. This is not a story about AI modestly improving the average venture. It is a story about AI expanding the ceiling of what the best ventures can achieve.

And perhaps most striking: treated firms asked for $224,000 less in external capital — a 39.5% reduction — with no change in labor demand. They believed they could do more with less. The economics of building a company were changing underneath them.

Why the Mapping Problem Is Hard

The reason firms struggle to discover where AI creates value isn't laziness or ignorance. It is structural. Three features of AI conspire to make the search problem unusually difficult.

First, AI's capabilities are uneven and hard to predict. Even closely related tasks can differ sharply in how well AI performs them. Experts, including AI researchers, systematically misjudge where AI will help and where it won't. The research by Dell'Acqua and colleagues at Harvard Business School showed that consultants using GPT-4 on tasks inside the technology's frontier improved performance by 40%, while those using it on tasks outside the frontier performed worse than they would have without AI. The frontier is jagged, and nobody has a reliable map.

Second, the search space is vast. Within any given firm, AI could potentially be applied to dozens of activities. Strategy research has long shown that managers search locally, in the neighborhood of what they already know. Without frameworks or analogies that broaden the space they consider, firms default to the most obvious applications. A chatbot for customer support. An LLM to draft emails. A coding assistant to write boilerplate. These are the low-hanging fruit, and they are often the lowest-value applications.

Third, complementarities compound the difficulty. This is the deepest layer, and the one most readers will miss. In many production processes, the value of improving one activity depends on whether adjacent activities adjust as well. When tasks are quality complements, meaning a single weak link can spoil the product, delay the workflow, or undermine effort elsewhere, partial automation preserves bottlenecks rather than relieving them.

The Gans and Goldfarb "O-Ring Automation" paper, published in January 2026, formalizes this precisely. In their model, automating one task changes the return to automating others. A firm that applies AI to a single step may see negligible gains because the bottleneck simply moves. But a firm that maps AI across multiple complementary steps can unlock multiplicative improvements, gains that compound across the production chain.

This is consistent with what the experimental data show. The case studies presented to treated firms illustrated this logic concretely. In one case, the AI-native presentations company Gamma compressed its product development chain from a sequence of specialists working in quarterly cycles to a single product manager continuously shipping features, with AI detecting usage patterns and generating product variants directly. In another, the accounts receivable startup FazeShift replaced the human "glue" between software systems (the clerks who manually bridged between Excel, QuickBooks, bank portals, and email) with a fully automated end-to-end sequence. Partial automation would have preserved the bottleneck. Only automating the full chain converted what was a labor-intensive professional service into a scalable software product.

It is worth noting that the paper does not directly measure task interdependence or identify specific bottleneck removal; the complementarities mechanism is inferred from the pattern of results, particularly the upper-tail concentration of gains, rather than causally pinned down. But the pattern is striking, and the interpretation is consistent with the theoretical logic.
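The complementarity logic can be made concrete with a toy calculation. The sketch below is not the Gans and Goldfarb model itself; it assumes a simple multiplicative production function in the spirit of O-ring models, where output is the product of per-step quality. The four-step chain, the human quality of 0.8, and the AI quality of 0.99 are all hypothetical numbers chosen for illustration.

```python
from math import prod


def chain_output(qualities):
    """Toy O-ring-style output: the product of per-step quality levels in (0, 1]."""
    return prod(qualities)


def outputs_by_steps_automated(human_q, ai_q, n_steps):
    """Output of the chain when the first k steps are automated, for k = 0..n_steps."""
    return [
        chain_output([ai_q] * k + [human_q] * (n_steps - k))
        for k in range(n_steps + 1)
    ]


# Hypothetical values: every human-run step succeeds at 0.8, every AI-run step at 0.99.
outputs = outputs_by_steps_automated(human_q=0.8, ai_q=0.99, n_steps=4)

# Gain from automating each additional step, given the steps already automated.
marginal_gains = [after - before for before, after in zip(outputs, outputs[1:])]

for k, out in enumerate(outputs):
    print(f"{k} steps automated: output = {out:.4f}")
for k, gain in enumerate(marginal_gains, start=1):
    print(f"gain from automating step {k}: {gain:.4f}")
```

Under these assumptions, each additional automated step adds more output than the one before it, because its improvement is multiplied by the higher quality of the steps already automated. A firm that stops after one step captures the smallest of the four gains.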

The treated firms didn't just learn to use AI tools. They learned to see their production processes as chains of interconnected activities and to ask: where in this chain does AI create the most leverage, and what else has to change to make that leverage real?

What This Means for the Productivity Paradox

The current state of AI productivity evidence is a paradox only if you expect technology to automatically translate into output. It isn't a paradox at all once you understand the mapping problem.

Here is what the data show in early 2026. AI adoption is widespread: roughly 43% of U.S. workers and 32% of European workers report using AI at work. Yet only 7% of firms use AI in production. The vast majority of adopters are using AI for narrow, individual-level tasks: drafting text, summarizing documents, and generating images. These are legitimate uses, but they don't change how the firm produces its output. They don't remove bottlenecks. They don't reorganize chains of complementary activities. They improve a single task in isolation, and the gains dissipate before reaching the firm's bottom line.

Meanwhile, a small cohort of firms — Brynjolfsson's "power users" — are doing something fundamentally different. They are automating end-to-end workstreams. They are rethinking which tasks even exist. They are using AI not to do existing work faster but to change the structure of production. These firms are driving the macro productivity signal and are disproportionately concentrated in the upper tail.

The Kim, Kim, and Koning paper suggests that the difference between these two groups is not primarily about resources, technical skill, or even ambition. It is about whether they have solved the mapping problem: whether they have discovered where in their production process AI creates value, and how to reorganize the rest of the firm to capture it.

This interpretation is consistent with the MIT Sloan research using U.S. Census data, which found that AI adoption tends to reduce productivity in the short term before producing gains over time, the J-curve pattern. But the Kim paper adds a crucial nuance: firms aren't just waiting for the J-curve to turn. Many of them are on the wrong curve entirely. They have mapped AI to low-value applications and will never see compound gains, no matter how long they wait. The J-curve only inflects if the firm has found the right configuration.

The Absence of Heterogeneity

One of the paper's most subtle findings is what it doesn't find. The treatment effects do not vary significantly by baseline venture performance or by the founder's technical background. High-traction firms and low-traction firms benefit similarly. Engineers and non-engineers benefit similarly.

This is worth pausing on. If the constraint were technical, if the treatment primarily taught founders how to prompt or code with AI, technically trained founders should benefit less, since they already possess those capabilities. If the constraint were about general managerial or entrepreneurial ability, higher-performing firms should benefit more. That neither dimension predicts treatment response is consistent with the constraint being something other than technical skill or general ability: how broadly founders search across their production processes for deployment opportunities.

This is a cognitive constraint, not a capability constraint. And it is precisely the kind of constraint that frameworks, case studies, and structured exploration can alleviate, regardless of background or starting position.

The contrast with the Otis et al. experiment with Kenyan entrepreneurs is instructive. In that study, access to a GPT-4-powered AI mentor via WhatsApp produced no average treatment effect, but high-performing entrepreneurs benefited while low-performing entrepreneurs actually did worse. The crucial difference: Otis varied access to AI. Kim varied information about how to reorganize around AI. Same technology, fundamentally different intervention, fundamentally different results. The mapping problem is not about having the tool. It is about knowing where to point it.

The Capital Demand Result

Most commentary on this paper will focus on the revenue and customer acquisition numbers. I think the most profound finding is the reduction in capital demand.

Treated firms reduced their anticipated capital ask by 39.5%, over $220,000, while growing faster and demanding no additional labor. When you examine the quantile distribution, the largest reductions in capital ask come from firms above the 60th percentile. These aren't failing firms that no longer need money because they've given up. These are growing firms that believe they can achieve their goals with substantially less external funding.

The implication is that AI is not just improving output. It is changing the shape of the production function itself — the relationship between inputs and outputs. Firms that have mapped AI across their production processes can produce more output with the same resources. This is the textbook definition of total factor productivity growth, and it appears at the individual-firm level in experimental data.

For the venture ecosystem, this has structural consequences. If AI-native firms can reach meaningful revenue milestones with less capital, the traditional logic of venture funding — front-load capital, hire a large team, burn cash to acquire users, raise more capital — may be giving way to something leaner. The Ranger case study in the paper illustrates this explicitly: the founder sells QA testing as a service from day one, builds AI from operational learnings, and moves fundraising from the first step to the last. The entire business model sequence is reordered.
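The capital figures reported above can be sanity-checked with a little arithmetic. The sketch below backs out the capital ask implied for control firms, assuming the 39.5% reduction is expressed relative to the control-group mean. That is my reading of the reported numbers, not something the paper is quoted as stating, and the helper name `implied_baseline` is purely illustrative.

```python
def implied_baseline(absolute_change, relative_change):
    """Baseline level implied by an absolute change and the same change as a fraction."""
    if relative_change <= 0:
        raise ValueError("relative_change must be positive")
    return absolute_change / relative_change


# Reported figures: treated firms asked for $224,000 less, a 39.5% reduction.
reduction_usd = 224_000
reduction_share = 0.395

# Assumption: the percentage is measured against the control-group mean ask.
control_ask = implied_baseline(reduction_usd, reduction_share)
treated_ask = control_ask - reduction_usd

print(f"implied control-group ask: ${control_ask:,.0f}")
print(f"implied treated-group ask: ${treated_ask:,.0f}")
```

On that reading, control firms were asking for roughly $567,000 and treated firms for roughly $343,000. The same helper applies to the use-case result: 2.7 additional use cases at a 44% increase implies a control baseline of about six use cases per firm.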

The Recursive Challenge

Here is the part that should concern anyone considering an AI strategy over a multi-year horizon. The mapping problem does not go away once it is solved. It gets harder.

As AI models grow more capable, the set of activities they can perform expands. Each new capability creates new potential applications that firms must discover. The search space grows. The landscape shifts. A firm that has figured out where to deploy today's AI will face the same discovery problem again when next year's models can do more.

This means the mapping problem is not a one-time challenge to be solved and filed away. It is an ongoing organizational capability: the ability to continuously identify where new AI capabilities create value and reorganize production accordingly. Firms that build this capability as a muscle, not a project, will compound their advantage over time. Firms that treat AI integration as a one-shot initiative will find themselves repeatedly stuck at the beginning of the J-curve, never reaching the inflection point.

The paper's experimental window was ten weeks. Whether the treated firms' advantages persist, compound, or decay is unknown. But the logic of the mapping problem suggests that the firms that learned how to search, not just what to find, are most likely to sustain their edge as capabilities evolve.

What to Do About It

The practical implications are clearer than they might first appear.

For firms: AI strategy should not begin with tool selection. It should begin with a systematic examination of the full production process, every chain of activities that converts inputs to outputs, and an honest assessment of where bottlenecks lie, where tasks are complementary, and where AI could change the structure of work rather than merely accelerating it. The case studies in this paper provide a useful template: map the production chain before and after AI, identify who performs each step, and look for opportunities to compress, parallelize, or eliminate entire sequences. The highest-value applications are almost never the most obvious ones.

For policymakers: the dominant AI adoption policy — subsidize tools, distribute credits, train workers in prompting — addresses a friction that this paper suggests is not the binding one. Programs that help managers and entrepreneurs discover where to deploy AI and how to reorganize their operations around it may deliver substantially higher returns. This is harder to scale than distributing API credits, but the experimental evidence suggests it is far more impactful.

For the broader debate: the AI productivity paradox is real, but it is not a verdict on the technology. It is a verdict on the speed at which organizations can solve a search problem over a vast, jagged, and rapidly shifting landscape of possible applications. The technology works. The task-level evidence is unambiguous. The firm-level evidence is now emerging, concentrated among those who have figured out where to apply it. The question is not whether AI will transform production. It is how quickly firms can learn to see where the transformation should happen, and who will help them look.