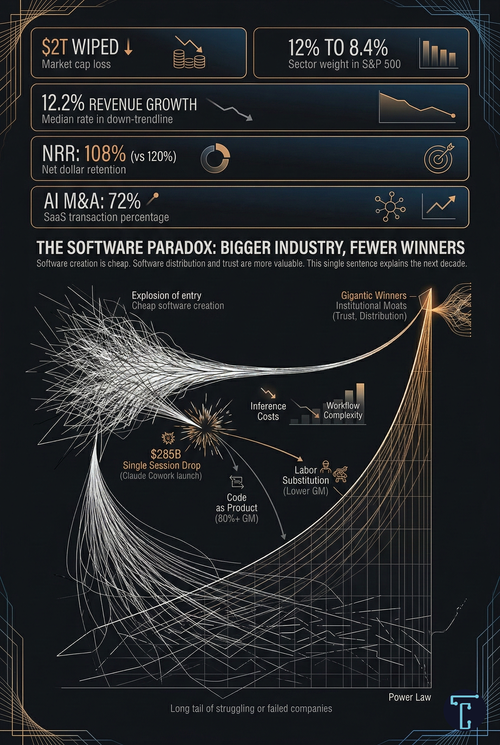

Software creation is becoming cheap, while software distribution and trust are becoming more valuable. That single sentence explains the next decade.

The software industry has just experienced its largest non-recessionary drawdown in over 30 years. According to J.P. Morgan, approximately $2 trillion has been wiped from software market capitalizations since late January 2026, reducing the sector’s weight in the S&P 500 from 12% to 8.4%. The median public SaaS company now trades at roughly 4.8x to 6.3x revenue, a level not seen since the mid-2010s.

The causes are layered, as large sector drawdowns always are. SaaS growth rates have declined every quarter since the 2022 peak. Median revenue growth has fallen to 12.2%. Net dollar retention, the clearest signal of whether existing customers are spending more or less, has dropped from around 120% in 2021 to roughly 108% by late 2024. Rising interest rates, AI infrastructure capex uncertainty, and three years of slowing fundamentals built the pressure. AI capability announcements, most notably Anthropic’s launch of Claude Cowork legal tools on February 3, which erased $285 billion in a single session, crystallized a repricing that had been building over the past few quarters.

The debate that followed has split into two camps. The bears say software is dying. The bulls say software has never been more valuable. Both marshal compelling evidence and arrive at opposite conclusions.

Both are right. And that is the most uncomfortable insight of all. The resolution is not that one side wins. It is that two different things happen simultaneously: AI will produce the most valuable software companies in history while making life structurally harder for the majority of software businesses that exist today. The industry gets larger. The winners get fewer.

The Pattern That Keeps Repeating

This outcome is not novel. It is the dominant pattern in every major technological platform shift in history.

The PC era produced Microsoft and Intel — and killed most minicomputer companies. The internet gave rise to Google and Amazon — and killed AOL, Yahoo, and Netscape. Mobile produced Apple’s and Google’s platform duopolies — and killed BlackBerry and Nokia. Cloud produced AWS and Azure, and compressed traditional enterprise software vendors.

Each transition followed an identical sequence: a new technology lowers barriers to entry, an explosion of startups follows, brutal consolidation ensues, and the final market structure is a power law — a small number of gigantic winners, a modest number of profitable specialists, and a long tail of struggling or failed companies.

The software industry is entering this sequence now. AI is the technology that lowers barriers. The entry explosion is underway — AI-referenced targets accounted for approximately 72% of all SaaS M&A transactions in 2025. The consolidation has not yet occurred. But every historical precedent says it will.

Current valuation data already reveals early sorting. The interquartile range in SaaS valuations has roughly doubled from the pre-COVID period. Top-quartile companies command 10x to 15x revenue. Bottom-quartile companies trade below 2x. The single variable that most clearly separates them is net revenue retention: companies with NRR above 120% trade at about 3x the multiple of those with NRR below 100%. The median masks a bifurcation so severe that two companies with identical revenue receive wildly different valuations based on which side of the line they sit on.
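Because net revenue retention carries so much weight in that sorting, it helps to see how the metric is usually computed. The sketch below uses the standard cohort definition (starting recurring revenue plus expansion, minus contraction and churn, divided by starting recurring revenue). The two cohorts and their dollar figures are hypothetical, chosen only to land on either side of the 100% line.

```python
def net_revenue_retention(starting_arr, expansion, contraction, churn):
    """NRR for one customer cohort over a period.

    Only revenue from customers who existed at the start of the period
    counts; new-customer revenue is excluded by construction.
    """
    if starting_arr <= 0:
        raise ValueError("starting_arr must be positive")
    return (starting_arr + expansion - contraction - churn) / starting_arr


# Hypothetical cohorts, each starting the year at $10M of recurring revenue.
expanding = net_revenue_retention(10_000_000, 3_000_000, 400_000, 500_000)
shrinking = net_revenue_retention(10_000_000, 500_000, 600_000, 900_000)

print(f"expanding cohort NRR: {expanding:.0%}")
print(f"shrinking cohort NRR: {shrinking:.0%}")
```

The first cohort grows without a single new logo; the second needs new sales just to stand still. That difference is what the multiple gap is pricing.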

The Deeper Shift: From Product to Labor Substitution

Most analyses of SaaS repricing focus on competition and margin compression. These are real, but they are symptoms of a more fundamental transformation that almost no one is framing correctly.

For two decades, software was a product industry. Companies built code once and sold access to it a practically unlimited number of times. The marginal cost of adding the ten-thousandth user was effectively zero. This produced gross margins above 80%, the signature financial characteristic that separated software from every other sector and justified premium multiples.

AI is turning software into a labor substitution industry. When an AI agent resolves a customer support ticket, generates a legal brief, or processes an insurance claim, the software company is no longer selling access to code. It is selling the replacement of a human task. Every unit of output has a real unit of input cost — the inference compute consumed to deliver the outcome.

This changes three things simultaneously.

The competitive set changes. When you sell code, you compete with other software companies. When you sell labor replacement, you compete with staffing firms, outsourcers, business process operators, and the customer’s own employees. The pricing benchmark shifts from “what does competing software cost?” to “what does the human worker cost?” Your margin depends on the spread between what you charge and what inference costs.

The margin structure changes. Traditional SaaS had near-zero marginal cost, producing over 80% gross margins. Data from Bessemer Venture Partners shows that fast-scaling AI companies average about 25% gross margins, while mature AI-native companies stabilize at around 60%. Meanwhile, 84% of enterprises report AI infrastructure costs eroding gross margins by 6% or more.

The valuation framework changes. Below approximately 65% gross margins, Wall Street’s models reclassify a business from software to services-adjacent and reprice from 10-15x revenue to 2-4x. The transition to AI-delivered outcomes, designed to save software companies from seat-count erosion, may cost them the margin profile that justified their premium valuations.
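The arithmetic behind these three changes can be made concrete with a minimal sketch. It treats inference as the only cost of revenue and models the reclassification as a hard step at 65%, using the multiple bands quoted above. Both are simplifying assumptions: real valuation models are not step functions, and the per-outcome prices, inference costs, and revenue figure are hypothetical.

```python
SOFTWARE_MARGIN_THRESHOLD = 0.65  # approximate cutoff cited in the text


def gross_margin(price_per_outcome, inference_cost_per_outcome):
    """Gross margin when inference is the only cost of revenue (assumption)."""
    if price_per_outcome <= 0:
        raise ValueError("price_per_outcome must be positive")
    return (price_per_outcome - inference_cost_per_outcome) / price_per_outcome


def multiple_band(margin):
    """Revenue-multiple band as a stylized step function of gross margin."""
    if margin >= SOFTWARE_MARGIN_THRESHOLD:
        return (10.0, 15.0)  # priced as software
    return (2.0, 4.0)        # priced as services-adjacent


def implied_value_range(annual_revenue, margin):
    """Low and high enterprise value implied by the band for this margin."""
    low, high = multiple_band(margin)
    return (annual_revenue * low, annual_revenue * high)


# Hypothetical: the same $1.00 price per resolved ticket, two inference costs.
efficient = gross_margin(1.00, 0.20)
expensive = gross_margin(1.00, 0.45)

for label, margin in [("efficient", efficient), ("expensive", expensive)]:
    low, high = implied_value_range(100_000_000, margin)
    print(f"{label}: margin {margin:.0%}, value ${low/1e9:.1f}B to ${high/1e9:.1f}B")
```

Under these assumptions, a 25-cent difference in inference cost per outcome moves a company with $100 million in revenue from a $1.0 to $1.5 billion range to a $0.2 to $0.4 billion range. The revenue is identical; only the spread changed.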

But — and this is the critical nuance — the margin outcome is genuinely unresolved. Two powerful forces are racing against each other. Inference costs on established models are falling rapidly: OpenAI’s compute margins improved from roughly 35% in early 2024 to approximately 70% by late 2025. Better chips, smaller models, quantization, caching, and intelligent routing are all compressing costs. Cursor has already built proprietary models that run 4x faster than comparable frontier models, with margins projected to improve from 74% to 85% by 2027.

Working against this: frontier models required for complex enterprise workflows are becoming more expensive. Agentic workloads have increased token consumption per task by 10x to 100x. Companies betting on margin recovery through inference deflation may find the goalposts moving faster than the cost curve.
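The race between the two forces reduces to one multiplication: cost per task equals tokens per task times price per token. A rough sketch follows. The 10x and 100x token multipliers come from the range above; the baseline of 2,000 tokens at $10 per million tokens and the assumed 10x price decline are hypothetical, picked to keep the arithmetic readable.

```python
def cost_per_task(tokens_per_task, price_per_million_tokens):
    """Inference cost of one task in dollars."""
    return tokens_per_task * price_per_million_tokens / 1_000_000


# Hypothetical baseline: a simple, single-call task.
BASE_TOKENS = 2_000
BASE_PRICE = 10.0    # dollars per million tokens (assumed)
PRICE_DECLINE = 10   # assumed: price per token falls 10x

baseline = cost_per_task(BASE_TOKENS, BASE_PRICE)

# Agentic workloads multiply token use by 10x to 100x (range from the text).
agentic_low = cost_per_task(BASE_TOKENS * 10, BASE_PRICE / PRICE_DECLINE)
agentic_high = cost_per_task(BASE_TOKENS * 100, BASE_PRICE / PRICE_DECLINE)

print(f"baseline task:         ${baseline:.2f}")
print(f"agentic, 10x tokens:   ${agentic_low:.2f}")
print(f"agentic, 100x tokens:  ${agentic_high:.2f}")
```

With these numbers, a 10x fall in token prices is fully absorbed by a 10x rise in token use, and a 100x rise leaves each task ten times more expensive than before. Cheaper tokens lower the cost of yesterday's task, not necessarily tomorrow's.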

This race is the central uncertainty in the entire software repricing process. Its outcome will determine trillions of dollars in market value. And the honest answer is that nobody — not the bulls, not the bears, not the model providers — knows how it resolves.

What the Bears Get Right — and Wrong

Three structural forces are genuinely compressing parts of the software market.

The option to build changes every negotiation. The cost of prototyping a vertical SaaS tool has collapsed from millions of dollars to near-zero. Most companies will not build their own software, but the credible option to do so puts pressure on pricing in every renewal conversation. This is similar to how open source affected enterprise software pricing in the 2000s. Linux rarely replaced Windows outright, but it forced Microsoft to change pricing and strategy for a decade. AI-enabled prototyping is doing the same: it changes the negotiation far more often than it changes what customers actually buy.

Nobody has found a pricing equilibrium. Salesforce cycled through three fundamentally different pricing architectures for Agentforce within eight months — from $2 per conversation, to $0.10 per action, to $550 per seat for unlimited usage. This is not confusion in one company. A recent analysis found that 92% of AI software companies now use mixed pricing models precisely because no single model works.

Per-seat pricing is structurally challenged. When AI agents reduce the number of humans who interact with a system, per-seat revenue declines. The transition to outcome-based or consumption-based pricing is necessary but creates its own problems: unpredictable customer bills, complex measurement, and the variable-cost margin pressure described above.

But the bears consistently get three things wrong.

Switching costs are institutional, not technical. The argument that AI agents can navigate screens and therefore eliminate lock-in misunderstands where enterprise switching costs live. They live in data migration, employee retraining, integration rebuilds, vendor risk assessment, and compliance approvals. For systems of record, these costs remain institutional, and AI does not materially change institutional friction.

Coding was never the hardest part. The Klarna case illustrates this perfectly. After aggressively replacing Salesforce and Workday with an AI-built internal stack and cutting its workforce from 5,000 to under 3,000, Klarna saved roughly $40-50 million annually. Then, within months, the company admitted the approach “went too far” and began hiring humans back after AI tools delivered insufficient quality for customer-facing functions. Klarna’s CEO — initially the poster child for the build-over-buy revolution — was publicly embarrassed by the narrative and explicitly said he did not expect other companies to follow suit. The Klarna arc, from triumphant disruption to embarrassed correction within eight months, captures the entire truth of the build-versus-buy debate in a single case study.

The bears ignore value migration. Markets do not merely destroy value during platform transitions. They migrate it. Every technological shift compressed the previous generation’s moats while creating new ones. The question for investors is not whether returns vanish but where they concentrate next.

What the Bulls Get Wrong

The bull case — most forcefully articulated by a16z’s recent argument that AI is “the best thing that ever happened to the software industry” — has its own blind spots.

TAM expansion does not guarantee margin preservation. The “Service-as-a-Software” thesis is intellectually coherent: if AI-enabled software absorbs labor budgets alongside IT budgets, the addressable market expands from hundreds of billions to trillions. But competing for labor budgets means competing with staffing firms, outsourcers, and the customer’s own employees — with a product that has real marginal cost. A larger addressable market at structurally lower margins is a fundamentally different asset class than what investors have been paying for.

“The industry will win” is not an investment thesis. The airline industry has been winning for consumers since 1978. It has been a catastrophe for most capital allocators almost the entire time. Saying the total software market will be larger tells you nothing about whether any particular company generates adequate returns.

How the Market Restructures

By the early 2030s, the software industry is likely to resolve into four distinct layers, each with different economics. The value distribution across these layers is genuinely uncertain — history shows that application layers can capture enormous value, not just infrastructure — but the structural logic of each layer is already visible.

Infrastructure and Model Providers. NVIDIA, the hyperscalers, and the foundation model companies. These capture value because much of the ecosystem depends on them. Hyperscalers plan to spend $660 billion on AI infrastructure in 2026 alone. But dependence does not automatically guarantee outsized returns — it depends on competition, commoditization of models, and whether the infrastructure layer itself becomes a margin-compressed utility. The outcome here is less predetermined than most analysts assume.

AI-Native Platforms. Enterprise orchestration and governance platforms that become the control layer for AI deployment. ServiceNow is the current archetype: $13.3 billion in annual revenue in 2025, 98% renewal rates, 19-20% subscription growth guidance for 2026, and operating margins expanding to 32%. Its positioning as an “AI Control Tower” — the governance layer that manages agents from any vendor — is a bet that trust and orchestration command premium valuations because they deliver something that cannot be easily replicated: compliance, auditability, and institutional reliability. Whether this positioning sustains premium multiples over time remains an open question — but the early evidence is encouraging.

Specialized Vertical Software. Companies with proprietary data, deep domain expertise, and workflows inseparable from the organizations they serve. Legal AI, medical AI, financial data, and security. These companies survive because their value comes not from generic code but from accumulated institutional knowledge — the templates, review processes, regulatory frameworks, and domain-specific reasoning that no general-purpose model replicates without years of co-evolution with customers. Process power may be the most durable moat of the AI era, because the hard part was never raw intelligence. It was knowing what to do with it.

Commodity and Mid-Market Software. This is where the repricing hits hardest, but it is important to be precise about what "commodity" means. It does not mean worthless. Many mid-market SaaS tools provide genuine operational value, solve real problems, and maintain loyal customer bases. What it means is that these companies face structurally declining pricing power: the barrier to competitive entry has dropped dramatically, the option to build pressures every renewal negotiation, and the value proposition is harder to defend as AI raises the baseline of what every tool can do. Some of these companies will survive by deepening integrations, owning niche workflows, or building genuine customer relationships that transcend the code. Others will compress to utility-like margins. Sorting within this layer will be as important as sorting across layers.

The Organizing Principle

If there is a single thesis that reconciles the bear and bull cases, it is this: software creation is becoming cheap, but software distribution and trust are becoming more valuable.

When something becomes easy to produce, scarcity migrates elsewhere. The printing press made books cheap and made attention valuable. The internet made content cheap and made distribution valuable. Cloud made servers cheap and made platforms valuable. AI is making code cheap — and making trust, integration, ecosystem position, and institutional relationships valuable.

These are the assets that separate winners from losers in the AI era. They are institutional, not technical. AI barely touches them. In fact, AI may make them more valuable — because when there are infinite options, aggregation around trusted platforms becomes more important, not less. When anyone can build a tool, the question shifts from “can we build this?” to “do we trust this enough to run our business on it?”

This is why the SaaSpocalypse narrative, while directionally correct about parts of the market, is wrong about the industry as a whole. The companies that own distribution and trust will not merely survive. They will be larger and more dominant than anything the SaaS era produced, because they will serve a larger market, enabled by AI, while their competitive position strengthens as code around them becomes commoditized.

What Comes Next

There will be more software in the world five years from now than there is today. There will be fewer software companies. The industry’s total value will be larger, and that value will be concentrated in fewer companies.

The strategic imperative for every software executive is not to add AI features and hope for the best. It is to answer, with uncomfortable honesty, whether their company’s advantages are institutional or merely technical. Institutional moats — ecosystem position, workflow integration, proprietary data, regulatory trust, enterprise distribution — are strengthened by the AI transition. Technical moats — code complexity, feature breadth, interface familiarity — are weakened or eliminated by it.

The companies that understand this distinction have time to act. The companies that do not will discover it through their stock price.

The sorting has just begun. But the pattern — power-law concentration after explosive entry — is as old as technology capitalism itself. And the question it poses is always the same: not whether the industry survives, but whether your company is among the few that emerge stronger, or the many that do not.