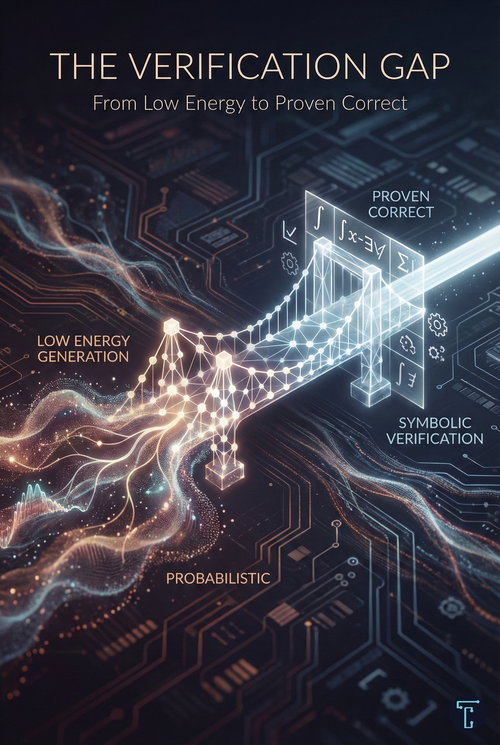

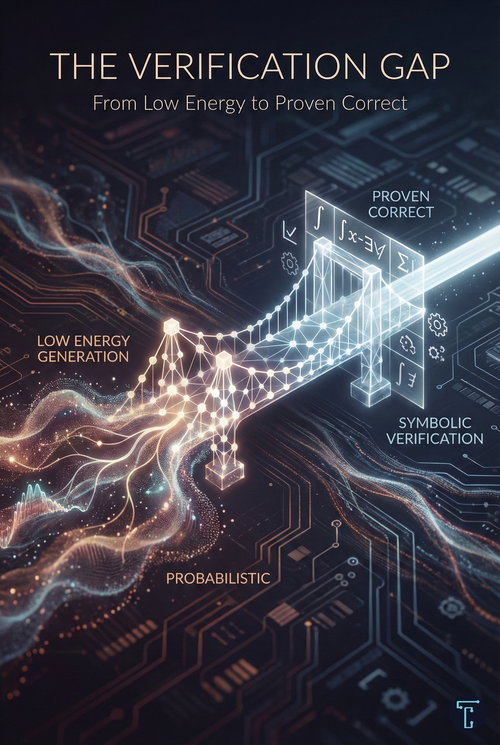

The Verification Gap: From Low Energy To Proven Correct

Generation is cheap. Verification is what creates trust. The systems that matter won't be the ones that generate plausible outputs—they'll be the ones that can prove their outputs are right.

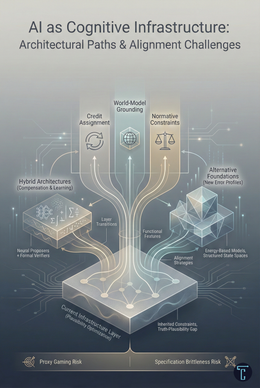

Yann LeCun left Meta and raised $3.5B on a radical thesis: autoregressive LLMs are a dead end. The future is world-model simulation, not token prediction. Language survives the shift. The current architecture may not.

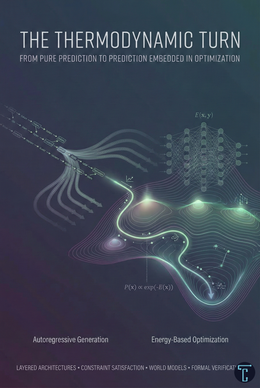

The most significant capability gains in AI aren't coming from bigger models—they're coming from embedding prediction inside optimization. Generation is becoming a proposal step. The thermodynamic turn is already underway.

LLMs optimize plausibility, not truth. RAG and verification compensate, but don't change the architecture. Real progress requires either systems that learn from outcomes over time or alternative foundations that sidestep token prediction entirely. Each path carries different error economics.

Two rival CEOs. One warning. At Davos 2026, Anthropic's Amodei and DeepMind's Hassabis agreed: AI will overwhelm our ability to adapt within years. The question is whether we shape the transition, or suffer it by default.