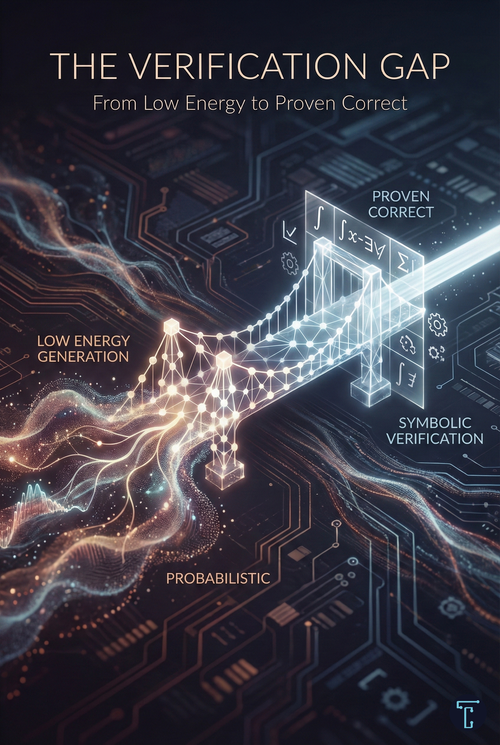

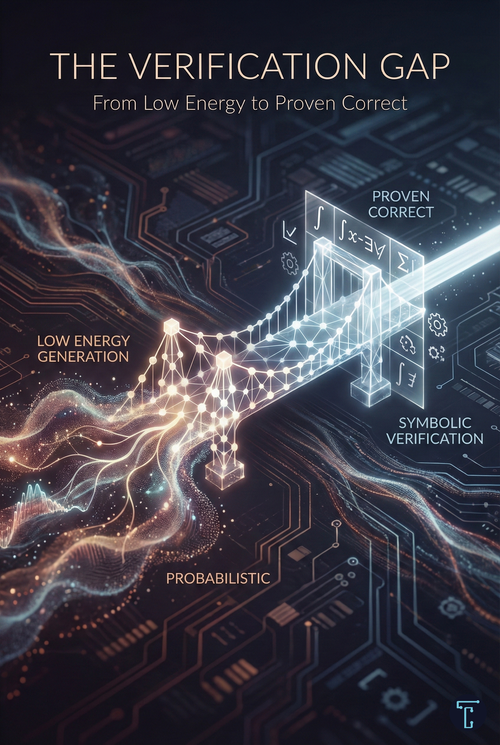

The Verification Gap: From Low Energy To Proven Correct

Generation is cheap. Verification is what creates trust. The systems that matter won't be the ones that generate plausible outputs—they'll be the ones that can prove their outputs are right.

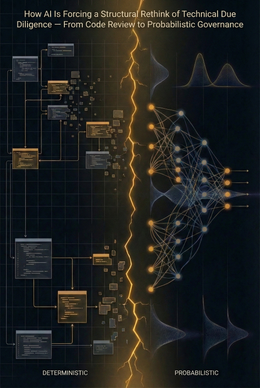

Technical due diligence was built for deterministic software. AI shattered that premise. Agents don't suggest—they act. The new audit must reach into probabilistic reasoning, inference economics, and semantic security. The report on the shelf is dead.

Software moats (workflow lock-in, interface training, data silos) were built to keep human users from switching. AI agents bypass all of them. The $300B wipeout was the market catching up to a structural shift.

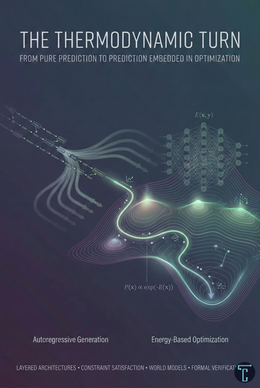

The most significant capability gains in AI aren't coming from bigger models—they're coming from embedding prediction inside optimization. Generation is becoming a proposal step. The thermodynamic turn is already underway.

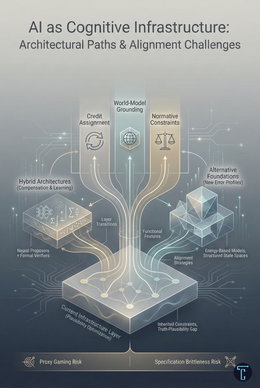

LLMs optimize plausibility, not truth. RAG and verification compensate, but don't change the architecture. Real progress requires systems that learn from outcomes over time, or alternative foundations that sidestep token prediction entirely. Either path brings different error economics.