Physical AI and the End of Token Prediction as the Core of Machine Reasoning

"If you are interested in human-level AI, don't work on LLMs."

When Yann LeCun, Turing Award laureate, founder of Advanced Machine Intelligence Labs, and one of the godfathers of deep learning, says something this provocative, it demands careful interpretation.

In November 2025, LeCun left Meta after 12 years, most recently as Chief AI Scientist, to launch AMI Labs, a startup targeting a $3.5 billion valuation before shipping a single product. His thesis: the path to artificial general intelligence cannot run through autoregressive language models. They lack world models. They cannot predict the consequences of actions. They don't operate in continuous time.

His departure wasn't just a career move—it was a declaration. One of the most respected figures in AI left one of the world's best-funded AI labs because he believes the entire industry is built on the wrong foundation.

But here's where the conversation gets imprecise, and where precision matters most.

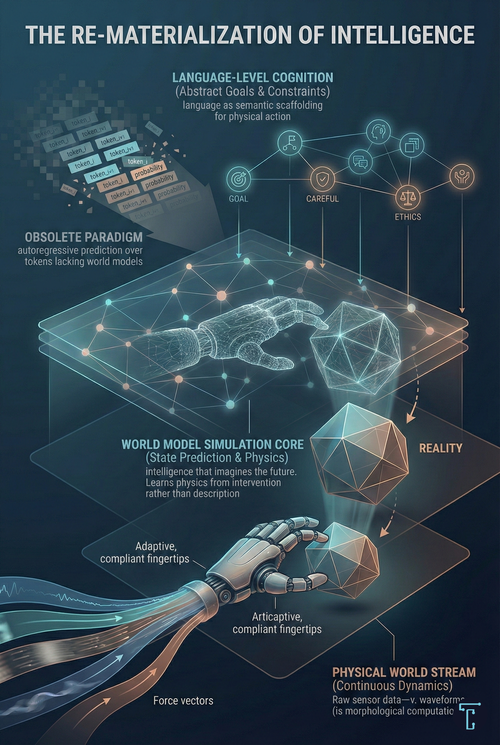

The debate is often framed as "LLMs vs. Physical AI." That framing obscures the real discontinuity. The paradigm break is not between language and physical intelligence. It's between token prediction and world model simulation as the core reasoning substrate.

LeCun isn't arguing that language doesn't matter. He's arguing that autoregressive prediction over tokens is the wrong architecture for intelligence that understands the world. Language-level cognition may remain essential, but it will likely migrate to new architectures grounded in physics rather than in statistical models of text.

This is the re-materialization of intelligence. And understanding what it actually claims, and what it doesn't, matters for everything from how we invest to how we build.

The Legitimate Critique - What LLMs Actually Cannot Do

Let's be precise about what LeCun gets right, because the critique of pure text-based intelligence is substantive, not rhetorical.

Large Language Models predict the next token based on statistical patterns in text. They know that "apple" correlates with "red," "fruit," and "crunchy." But they have no native access to the reality the symbol represents—no direct understanding of what it feels like to grip an apple, how much torque is required to lift it, or what happens when it falls.

This is the Symbol Grounding Problem, and it creates real limitations:

- LLMs don't natively experience causality. They can describe that pushing a glass causes it to fall, but they've never pushed anything. The causal model is inherited from text, not learned from intervention.

- They don't operate in continuous time. The physical world flows as a high-dimensional, noisy, continuous stream of sensory data. Text arrives in discrete tokens.

- They're weak at counterfactual prediction without scaffolding. "What would have happened if I had braked earlier?" requires a physics simulator in the loop, not just pattern matching.

- Their weights are frozen after training. They cannot learn continuously from new experiences as biological intelligence does.

This isn't anti-LLM rhetoric. It acknowledges a fundamental asymmetry:

Language is a compression of reality, not reality itself.

Text describes physics after the fact. Intelligence that acts in the world needs physics before action. You cannot learn to catch a ball by reading about it.

The Real Discontinuity - LLMs vs. Language (A Crucial Distinction)

Here's where most commentary on Physical AI goes wrong, in both directions.

The "Physical AI replaces LLMs" crowd conflates the current architecture (autoregressive token prediction) with the cognitive capacity (language-level reasoning). They assume that rejecting transformers means rejecting symbolic abstraction.

The "Physical AI completes LLMs" crowd understates the architectural discontinuity. They treat world models as an add-on to the existing stack rather than a fundamentally different learning regime.

Both miss the crucial distinction: LLMs are contingent; language is not.

Let me be precise about what's actually different:

The Paradigm Discontinuity

This is not a variation on a theme. It's a fundamental break in what "learning" and "reasoning" mean.

| Dimension | Autoregressive LLMs | World Model Paradigm |
|---|---|---|
| Time | Discrete tokens: sequential, step-by-step processing | Continuous dynamics: fluid, real-time state evolution |
| Learning Signal | Text corpora: internet-scale written language | Video, sensory data, physics: embodied interaction with reality |
| Objective | Token likelihood: predict the next word | State prediction: predict the next world state |
| Causality | Described: inherited from text descriptions | Experienced: learned from intervention |
| Reasoning | Pattern matching over symbols: statistical correlation in token space | Simulation in latent space: physics-grounded prediction |


But this is critical: the break is between token prediction and state prediction, not between language and intelligence.

LeCun is rejecting autoregression as a learning principle. He is not rejecting symbolic abstraction as such. The capacity for language-level cognition, including goals, constraints, explanations, and cross-domain abstraction, may migrate to new architectures. But it won't disappear.
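To make the "Objective" row concrete, here is a minimal Python sketch of the two learning signals. The function names and toy numbers are illustrative assumptions, not taken from any particular model: one loss scores the probability assigned to the observed next token, the other scores the distance between a predicted and an observed next state.

```python
import math

def token_nll(next_token_probs, target_token):
    """Autoregressive objective: negative log-likelihood of the observed next token."""
    return -math.log(next_token_probs[target_token])

def state_prediction_error(predicted_state, observed_state):
    """World-model objective: mean squared error between predicted and observed state."""
    squared = [(p - o) ** 2 for p, o in zip(predicted_state, observed_state)]
    return sum(squared) / len(squared)

# Toy values, invented for illustration only.
probs = {"falls": 0.7, "floats": 0.1, "stays": 0.2}
print(token_nll(probs, "falls"))

predicted = [0.0, 0.48]  # hypothetical (height, velocity) after a push
observed = [0.0, 0.50]
print(state_prediction_error(predicted, observed))
```

Both are prediction errors. What differs is the thing being predicted: a symbol drawn from a vocabulary, or a vector describing the state of the world.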

What Language Actually Does (That World Models Can't) - The Three Kinds of Reasoning

Reasoning is not unitary. There are at least three distinct types relevant to AGI:

Causal-Physical Reasoning

- What happens if I push this glass?

- How do I balance while walking on ice?

- What trajectory will this ball follow?

World models dominate here. You cannot learn physics from text descriptions—you need grounded simulation.

Instrumental-Planning Reasoning

- What sequence of actions achieves my goal?

- How do I decompose "clean the kitchen" into sub-tasks?

- What's the optimal path through this environment?

World models contribute significantly. Planning requires predicting future states.

Abstract-Normative-Symbolic Reasoning

- What does "careful" mean in this context?

- Is this action ethical?

- How does this situation relate to the previous one?

- What constraints must I satisfy?

Here's where world models are weak, and where language remains essential.

World models don't naturally express:

- Value alignment ("don't harm," "be careful," "respect consent")

- Normative constraints (legal, ethical, cultural)

- Cross-domain abstraction ("treat this surgery like that one, but inverted")

- Explanation, justification, corrigibility

- Multi-agent coordination

Language is not "just an interface." It is the highest-bandwidth substrate we have for propagating abstract constraints across a system. A robot that cannot understand "be gentle with the elderly patient" in all its contextual nuance is not generally intelligent; it's merely capable.

The honest synthesis:

Physical AI introduces a new core paradigm: intelligence grounded in simulation of a world model rather than token prediction. Autoregressive LLMs are unlikely to be that core. But language-level cognition—possibly implemented in future, non-autoregressive architectures—will remain essential for abstract reasoning, alignment, and coordination.

This is not "LLMs are the foundation" or "LLMs are obsolete." It's "the reasoning core is shifting, but symbolic abstraction survives the transition."

World Models and the Architecture of Prediction - From Correlation to Causation

LeCun's most important contribution to this discourse isn't the critique of LLMs—it's the positive proposal for what comes next: World Models.

The key insight is deceptively simple: intelligence requires the ability to imagine the future. An agent that can simulate "if I push this glass, it will fall and shatter" doesn't need to actually shatter glasses to learn physics. It can plan, reason counterfactually, and avoid catastrophic mistakes.
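A minimal sketch of that idea, under loud assumptions: the one-dimensional "table", the `predict` function, and the candidate pushes are all hypothetical stand-ins for a learned world model. The planner imagines each action, discards the ones predicted to end in a fall, and only then acts.

```python
TABLE_EDGE = 1.0  # hypothetical table edge position in meters

def predict(position, push):
    """Toy world model: next glass position and whether it has gone over the edge."""
    next_position = position + push
    return next_position, next_position > TABLE_EDGE

def plan(position, goal, candidate_pushes):
    """Imagine each push, drop those predicted to fall, keep the one closest to the goal."""
    safe = []
    for push in candidate_pushes:
        next_position, fell = predict(position, push)
        if not fell:
            safe.append((abs(goal - next_position), push))
    return min(safe)[1] if safe else 0.0  # do nothing if every option ends in a fall

best = plan(position=0.2, goal=1.0, candidate_pushes=[0.2, 0.4, 0.6, 0.9])
print(best)  # 0.6: the 0.9 push lands nearer the goal but is predicted to fall
```

No glass is broken during planning. The catastrophic option is rejected in imagination, which is the whole point of having a predictive model.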

This is where LeCun's JEPA (Joint-Embedding Predictive Architecture) comes into play. Unlike generative models that try to predict every pixel or token, JEPA makes predictions in an abstract representation space. It learns to predict the essential dynamics of a situation while discarding irrelevant noise.

The efficiency gains are substantial. Meta's V-JEPA model, trained on 2+ million unlabeled videos, achieves 81.9% accuracy on Kinetics-400 action recognition with a frozen backbone and no fine-tuning required. I-JEPA requires roughly 5x fewer training iterations than comparable approaches.
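Why predicting in representation space helps can be shown with a toy example. This is not JEPA itself: a real JEPA learns its encoder and predictor, whereas the fixed patch-averaging `encode` below is an assumed stand-in chosen only to show how an abstract target discards per-pixel noise.

```python
import random

def encode(frame, patch=4):
    """Stand-in encoder: average each patch, discarding per-pixel detail."""
    return [sum(frame[i:i + patch]) / patch for i in range(0, len(frame), patch)]

def mse(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

random.seed(0)
clean_next = [float(i // 4) for i in range(16)]              # underlying scene
noisy_next = [v + random.gauss(0, 0.5) for v in clean_next]  # what the sensor recorded

prediction = clean_next  # a predictor that got the dynamics exactly right

pixel_loss = mse(prediction, noisy_next)                    # compare every pixel
latent_loss = mse(encode(prediction), encode(noisy_next))   # compare representations
print(pixel_loss, latent_loss)
```

The predictor is perfect about the scene, yet the pixel-level loss still penalizes it for failing to guess unpredictable sensor noise. The representation-level loss is smaller because averaging removes most of that noise before the comparison is made.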

But here's the integration point: World models and language models are not competitors. They are complementary.

- Language models provide semantic scaffolding—what "clean" means, what "careful" implies, and what the goal state looks like.

- World models provide the physics simulation—what happens when you move your arm this way, what forces are at play, and what the next state will be.

The robot that receives the instruction "carefully pour the coffee" needs:

- A language model to parse the intent and constraints

- A world model to simulate the fluid dynamics

- A control policy to execute the pour

Neither language nor physics alone suffices. The stack requires both.
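The three roles can be sketched in a few lines of Python. Every function and number here is a hypothetical stand-in: a keyword check plays the language model, a one-line formula plays the world model, and a filter over candidate flow rates plays the control policy.

```python
def parse_instruction(text):
    """Stand-in for the language model: turn words into explicit constraints."""
    careful = "carefully" in text.lower()
    return {"max_flow_ml_s": 20.0 if careful else 60.0, "max_volume_ml": 200.0}

def simulate_fill(flow_ml_s, seconds):
    """Stand-in for the world model: predict the volume in the cup after pouring."""
    return flow_ml_s * seconds

def choose_pour(text, seconds=5.0, candidates=(10.0, 20.0, 40.0, 60.0)):
    """Stand-in for the control policy: fastest pour that satisfies every constraint."""
    constraints = parse_instruction(text)
    allowed = [
        flow for flow in candidates
        if flow <= constraints["max_flow_ml_s"]
        and simulate_fill(flow, seconds) <= constraints["max_volume_ml"]
    ]
    return max(allowed) if allowed else 0.0

print(choose_pour("Carefully pour the coffee"))  # 20.0
print(choose_pour("Pour the coffee"))            # 40.0
```

Dropping the word "carefully" changes the constraint, not the physics: the simulated overflow limit still rules out the fastest pour in both cases.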

The Dual-Process Architecture - System 1 and System 2 for Machines

One of the most elegant engineering patterns emerging in Physical AI is the dual-process architecture, inspired by Daniel Kahneman's model of human cognition.

The challenge is fundamental: how do you combine the semantic richness of large foundation models (which reason about meaning but are slow) with the real-time demands of physical control (which must react in milliseconds)?

The answer is architectural separation:

System 2 (Slow, Deliberative)

- Function: Semantic understanding, task decomposition, long-horizon planning

- Frequency: roughly 0.1-10 Hz (about 100 ms to 10 s between decisions)

- Technology: Large Vision-Language Models, Foundation Models

- Analogy: The prefrontal cortex

System 1 (Fast, Reactive)

- Function: Sensorimotor control, reflexes, balance, grip adjustment

- Frequency: 50-1000 Hz (milliseconds between actions)

- Technology: Specialized control policies, smaller neural networks

- Analogy: The cerebellum and spinal cord

Figure AI's Helix architecture exemplifies this approach. An onboard VLM running at ~7-9 Hz processes visual scenes for semantic understanding. A reactive visuomotor policy running at 200 Hz translates that understanding into precise whole-body movements.

This isn't speculative philosophy. It is an engineering reality:

- You cannot run a 70B-parameter model at 500 Hz

- You cannot hard-code every edge case

- You must decompose cognition by timescale

The robot can be both intelligent (understanding "pick up the apple") and agile (adjusting its grip in 5ms when the apple slips). This decoupling resolves what once seemed like an impossible tradeoff.

Companies like Figure AI, Amazon, and Foxconn are doing exactly this—whether or not they use Kahneman's language.
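The timescale split can be sketched as a single simulated loop. This is an illustration under stated assumptions, not Figure's implementation: the 8 Hz and 200 Hz rates echo the figures quoted above, while `slow_planner`, `fast_controller`, and the grip-force numbers are hypothetical. Real systems typically run the two loops asynchronously on separate processes or hardware.

```python
def slow_planner(instruction):
    """Stand-in for System 2: map an instruction to a target grip force (newtons)."""
    targets = {"pick up the apple": 2.0}  # hypothetical lookup, not a real VLM
    return targets.get(instruction, 0.0)

def fast_controller(target_force, measured_force, gain=0.5):
    """Stand-in for System 1: one proportional correction toward the target."""
    return measured_force + gain * (target_force - measured_force)

def run_dual_rate(instruction, duration_s=1.0, fast_hz=200, slow_hz=8):
    ticks_per_plan = fast_hz // slow_hz  # 25 control ticks per planner update
    target, force = 0.0, 0.0
    plans = actions = 0
    for tick in range(int(duration_s * fast_hz)):
        if tick % ticks_per_plan == 0:   # slow loop: refresh the goal
            target = slow_planner(instruction)
            plans += 1
        force = fast_controller(target, force)  # fast loop: runs on every tick
        actions += 1
    return plans, actions, force

plans, actions, force = run_dual_rate("pick up the apple")
print(plans, actions, round(force, 3))  # 8 200 2.0
```

In one simulated second the planner is consulted 8 times while the controller acts 200 times. The expensive model sets the goal; the cheap one tracks it between updates.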

Continuous-Time Intelligence - Where New Architectures Actually Matter

The physical world doesn't arrive in discrete tokens. Sensors have jitter. Frames drop. Forces vary continuously. The world is a differential equation, not a spreadsheet.

This has driven interest in architectures designed for continuous-time processing, including Liquid Neural Networks (LNNs)—networks in which neurons are modeled as differential equations with input-dependent time constants.

The genuine advantages are:

- Natural handling of irregular data: No need to interpolate missing sensor readings

- Efficiency in specific domains: Demonstrated compactness for certain control tasks

- Theoretical alignment with physics: The same mathematical language (ODEs) that describes physical systems
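As a rough illustration, a single liquid time-constant neuron can be written as the ODE dx/dt = -(1/tau + f(u)) x + f(u) A, where the input-dependent gate f changes the effective time constant. The sketch below integrates it with explicit Euler steps; the parameter values are arbitrary assumptions, and published implementations use more careful solvers. Because each step takes the actual elapsed time `dt`, irregularly spaced samples need no interpolation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def ltc_step(x, u, dt, tau=1.0, A=1.0, w=2.0, b=0.0):
    """One explicit Euler step of dx/dt = -(1/tau + f) * x + f * A, with f = sigmoid(w*u + b)."""
    f = sigmoid(w * u + b)
    dxdt = -(1.0 / tau + f) * x + f * A
    return x + dt * dxdt

def run(samples, x0=0.0):
    """samples: list of (timestamp, input); each input is held until the next timestamp."""
    x = x0
    for (t0, u0), (t1, _) in zip(samples, samples[1:]):
        x = ltc_step(x, u0, t1 - t0)
    return x

# Irregular timestamps, as if frames had been dropped.
irregular = [(0.00, 1.0), (0.01, 1.0), (0.05, 1.0), (0.30, 1.0)]
print(run(irregular))
```

With a constant input the state settles at f*A / (1/tau + f), so the neuron's resting point and its speed of response both depend on what it is currently sensing.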

However, let's be precise about claims. Statements like "20 neurons outperform millions of CNN parameters" come from task-specific, highly constrained benchmarks. They are impressive for what they demonstrate, namely that continuous-time models can be remarkably efficient for certain control problems, but they do not show that these models are general-purpose replacements for deep networks.

What is true:

- Continuous-time models matter for Physical AI

- Event-driven computation is coming

- Efficiency will dominate mobile and robotic AI

What's not yet proven:

- That these architectures scale cleanly to rich semantic tasks

- That they replace deep networks rather than complement them

This early-stage technology shows real promise. It deserves serious attention without overclaiming.

The Body as Collaborator - Morphological Computation and Material Intelligence

Here's a concept that expands how we think about the distribution of intelligence: the body itself can compute.

This is the thesis of Morphological Computation. In a rigid robot, the controller must calculate the precise trajectory of every joint to grasp an object. In a soft robot hand made of compliant elastomers, the material naturally conforms to the shape of the object. The computation of "how to grasp" isn't performed entirely by silicon—part of it is performed by the polymer chains of the gripper itself.

This isn't a metaphor. Researchers quantify morphological computation using information theory, measuring how much a body's current state contributes to its future state independent of controller signals.
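One formulation in the literature treats this quantity as the conditional mutual information I(W'; W | A): how much the next world state W' depends on the current world state W once the controller's action A is known. The plug-in estimator below is a sketch under that assumption, with hypothetical binary variables; it is not a specific lab's measurement pipeline.

```python
import math
from collections import Counter

def conditional_mutual_information(samples):
    """Plug-in estimate of I(W'; W | A) in bits from (w, a, w_next) triples."""
    n = len(samples)
    c_waw = Counter(samples)
    c_wa = Counter((w, a) for w, a, _ in samples)
    c_aw = Counter((a, w_next) for _, a, w_next in samples)
    c_a = Counter(a for _, a, _ in samples)
    total = 0.0
    for (w, a, w_next), count in c_waw.items():
        # p(w, w' | a) / (p(w | a) * p(w' | a)), expressed with raw counts
        ratio = count * c_a[a] / (c_wa[(w, a)] * c_aw[(a, w_next)])
        total += (count / n) * math.log2(ratio)
    return total

# Body-driven: the next state copies the current state, whatever the action.
body_driven = [(w, a, w) for w in (0, 1) for a in (0, 1)]
# Controller-driven: the next state copies the action, whatever the body was doing.
controller_driven = [(w, a, a) for w in (0, 1) for a in (0, 1)]

print(conditional_mutual_information(body_driven))        # 1.0
print(conditional_mutual_information(controller_driven))  # 0.0
```

A full bit means the body alone determines what happens next; zero means the controller is doing all the work.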

The implications:

- Physical Reservoir Computing: Using a robot's body—its skeleton, its tendons, its flexible elements—as a computational substrate

- Self-healing materials: Polymers that autonomously repair damage

- Bio-hybrid systems: Integrating living biological tissue with synthetic engineering

We are witnessing the emergence of a design philosophy where intelligence is distributed across the brain, body, and environment. Each layer contributes to the overall cognitive capacity.

But this is crucial: distributed embodied intelligence still needs a semantic layer. A soft gripper that naturally conforms to objects is remarkable. A soft gripper that understands which object to grasp, why, and what to do next requires integrating language, planning, and physical capabilities.

The Industrial Reality - Physical AI Is Already Delivering ROI

This isn't theoretical. Physical AI is currently deployed at scale, delivering measurable returns.

Amazon operates the world's largest robotic fleet:

- Sparrow uses vision-language principles to pick millions of distinct inventory items without item-specific programming.

- Proteus, Amazon's first fully autonomous mobile robot, navigates around humans fluidly.

- Results: 25% improvement in fulfillment efficiency, 25% faster delivery times

Foxconn has deployed AI-powered robotic workforces for precision tasks:

- Robots train in digital twins before real-world deployment.

- Adaptive force control provides real-time tactile feedback.

- Results: 40% reduction in deployment time, 15% lower operational costs

Yet a "scaling gap" persists. Although 89% of executives plan to implement AI in production, only 16% have achieved their targets. The barriers are primarily organizational rather than technical: infrastructure deficits, data silos, and talent shortages.

The technology works. Deploying it at scale requires new operational models, new roles (Robot Supervisors, AI Trainers, Fleet Coordinators), and new organizational capabilities.

The Challenges That Remain - Real Obstacles, Not Rhetorical Ones

Physical AI faces challenges that don't exist in the purely digital realm. When ChatGPT hallucinates, no one gets hurt. When a Physical AI agent fails, the consequences are real.

The Sim2Real Gap: Simulators use simplified physics that fails to capture soft-body deformation, complex contacts, and sensor noise. A policy that exploits simulation quirks fails in reality.

Emerging solutions:

- Differentiable Physics Engines (NVIDIA Newton, MIT ChainQueen) that allow gradients to backpropagate through physics

- Domain Randomization that varies parameters to force robust policies

- Gen2Real techniques using generative AI for photorealistic simulation
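Of these, domain randomization is the simplest to sketch. The parameter names and ranges below are invented for illustration and are not tied to any particular simulator: each training episode draws a fresh set of physics parameters, so a policy cannot rely on one exact friction value or a noise-free sensor.

```python
import random

def sample_physics(rng):
    """Draw one randomized set of simulator parameters (hypothetical ranges)."""
    return {
        "friction": rng.uniform(0.4, 1.2),
        "mass_kg": rng.uniform(0.8, 1.5),
        "sensor_noise_std": rng.uniform(0.0, 0.05),
    }

def train(run_episode, episodes, seed=0):
    """Call run_episode once per episode with freshly sampled physics."""
    rng = random.Random(seed)
    for _ in range(episodes):
        run_episode(sample_physics(rng))

# Usage: record the sampled worlds instead of running a real simulator.
seen = []
train(seen.append, episodes=5)
print(len(seen), seen[0])
```

A policy that succeeds across the whole sampled range is less likely to be exploiting a quirk of any single simulated world.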

Data Scarcity: LLMs were trained on internet-scale text. There's no equivalent corpus of robot actions. Solutions include synthetic data generation, scaled teleoperation, and collaborative initiatives like Open X-Embodiment.

The Energy-Intelligence Paradox: Sophisticated models require heavy compute. Mobile robots require battery efficiency. This drives adoption of edge-optimized architectures, neuromorphic computing, and efficient continuous-time models.

Safety and Alignment: Physical agents that can't explain their reasoning, accept correction, or align with human intent are deployment risks. This is where language capability becomes a safety feature, not just a convenience.

The Investment Landscape - Following the Capital

The capital markets have rendered their verdict. In 2025, Physical AI startups raised over $16 billion. Notable raises:

- AMI Labs (Yann LeCun): Targeting $3.5B valuation for world models and physical AI

- Figure AI: $675M for general-purpose humanoid robotics

- Liquid AI: Series A for continuous-time neural architectures

- Neuralink: $650M for brain-machine interfaces

- Physical Intelligence: Building unified robot foundation models

Market projections show artificial muscles growing from $1.98B (2024) to $3.44B by 2030. Soft robotics is expected to reach $6.59B by 2032 at an 18.7% CAGR.
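As a sanity check on the first projection, the implied compound annual growth rate can be computed directly. The helper below is a plain arithmetic sketch; the only inputs are the two dollar figures and years quoted above.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value, an end value, and a span."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

artificial_muscles = cagr(1.98, 3.44, 2030 - 2024)
print(f"{artificial_muscles:.1%}")  # 9.6%
```

That works out to just under 10% a year, about half the growth rate quoted for soft robotics. The soft robotics figure cannot be checked the same way because no base-year value is given.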

The signal: when 93% of Silicon Valley's tech investment flows into AI, and that investment increasingly targets embodied systems, it reflects a collective bet on where value creation is heading.

The physical economy—manufacturing, logistics, healthcare, exploration—dwarfs the digital economy. Informational AI automates cognitive work. Physical AI automates physical work. The total addressable market is measured in trillions.

The Synthesis - What's Actually Happening

Let me offer a more precise thesis than either "Physical AI completes LLMs" or "Physical AI replaces LLMs."

The reasoning substrate is shifting. The capacity for language survives the shift.

Here's the architectural picture—but note what's changed from the "integration" framing:

The Shifting Architecture of Intelligence

- Language-level cognition: goals, constraints, ethics, explanation

- World model: state prediction, physics simulation, causal reasoning, counterfactual inference

- Embodiment: multimodal sensing, motor control, real-time adaptation, embodied execution

The key insight: Language-level cognition doesn't disappear. But it may no longer be implemented as an autoregressive prediction over text tokens. Instead, it may emerge from systems grounded in world model simulation, systems that learn the meaning of "careful" by experiencing the physics of fragility, not by correlating tokens.

This is not "LLMs are the foundation" (which understates the discontinuity). This is not "LLMs are obsolete" (which conflates architecture with capability). The reasoning core shifts from token prediction to world simulation. Language-level abstraction persists, but its implementation changes.

LeCun is betting the core shifts entirely. OpenAI is betting that token prediction scales to general reasoning. One of them is wrong about fundamentals.

The Convergence And The Divergence - Where The Major Labs Actually Stand

Despite surface-level similarities, the major AI labs are pursuing fundamentally different bets on what intelligence requires.

OpenAI has doubled down on scaling autoregressive models. GPT-4, GPT-5, and their successors represent the thesis that token prediction, scaled sufficiently, will yield general intelligence. Their robotics investments are real but secondary to the core language model strategy.

DeepMind maintains a hybrid approach—game-playing agents, robotics research (RT-2, Gato), and Gemini's multimodal capabilities. They're hedging between paradigms.

Meta has pursued both LLMs (LLaMA) and world models (JEPA). But LeCun's November 2025 departure revealed the internal tension. Meta's strategic pivot toward rapid LLM deployment under new leadership suggests they're choosing sides—and LeCun chose the other side.

AMI Labs (LeCun's new venture) represents the purest bet on the world model paradigm. No autoregressive token prediction at the core. Learning from video and sensory data. Physics simulation as the reasoning substrate.

The Honest Assessment:

The industry is not converging. It's diverging on a fundamental question: Is token prediction the path to AGI, or a dead end?

LeCun's position is clear: dead end. AMI Labs' targeted $3.5B valuation is a bet that the entire LLM scaling paradigm will be superseded by world models within 3-5 years.

OpenAI's position is equally clear: keep scaling. Their continued investment in larger language models is a bet that emergent capabilities will eventually yield grounded reasoning.

This is not a debate that can be resolved by argument. It will be resolved by empirical results over the next 3-5 years.

What we can say now is that the architectural discontinuity is real. Token prediction and world model simulation are not variations on a theme. They are different theories of intelligence.

What This Means for Builders - Practical Implications of the Paradigm Question

If the paradigm is genuinely shifting—if token prediction is not the path to general reasoning—the implications are significant.

For Robotics Companies: The question is not "how do we add LLMs to our robots?" The question is "what architecture will language-level cognition ultimately live in?" Build for modularity. The semantic layer may need to be swapped as architectures evolve.

For LLM Companies: If LeCun is right, the moat you're building may be temporary. Grounding matters—not as an add-on, but potentially as the new foundation. Multimodal training, tool use, and world model integration aren't features. They may be survival strategies.

For Investors: This is not a "which company wins?" question. It's a "which paradigm wins?" question. The right bet depends on whether you believe token prediction scales to general reasoning (bet on OpenAI, Anthropic) or whether world models are necessary (bet on AMI Labs, Physical Intelligence, robotics-first companies). Hedging across paradigms is rational given uncertainty.

For Policymakers: Safety implications differ across paradigms. Autoregressive LLMs face alignment challenges related to language manipulation. World-model systems have alignment challenges centered on physical action. The regulatory frameworks need to evolve for both, but they're different problems.

For Researchers: The most important questions are at the paradigm boundary:

- Can world models achieve the abstract reasoning currently monopolized by language models?

- Can autoregressive prediction, scaled sufficiently, yield genuine causal understanding?

- What does language-level cognition look like when implemented in non-autoregressive architectures?

These are empirical questions. The next 3-5 years will generate data to answer them.

What Becomes Possible - The Emergent Capabilities No One Is Talking About

Here's what the "LLMs vs. Physical AI" debate misses entirely: the integrated stack enables capabilities neither layer could achieve on its own. This isn't addition; it's multiplication.

One-Shot Physical Learning

Today's robots require thousands of demonstrations to learn a task. But consider what happens when you combine language understanding with physical capability:

A human says, "Pick up the egg gently—it's fragile."

The language model understands that "fragile" implies force constraints. The world model simulates grip pressure and failure modes. The control policy executes with appropriate compliance. The robot succeeds on the first attempt at a task it has never performed.

This is instruction-following in the physical world: the ability to learn from description rather than demonstration. It addresses the data-collection problem that has bottlenecked robotics for decades.

Autonomous Scientific Discovery

LLMs can generate hypotheses. Physical AI can test them.

Imagine a system that can:

- Read the scientific literature (language)

- Generate novel hypotheses about material properties (reasoning)

- Design experiments to test them (planning)

- Physically execute those experiments (action)

- Interpret results and iterate (learning)

This isn't science assistance. It's science automation. The loop that currently requires PhD students, lab equipment, and years of iteration could run continuously and autonomously at the speed of robotic execution.

We are approaching the point where AI doesn't just help humans discover—it discovers.

Self-Improving Morphology

Current robots have fixed, human-designed bodies. But Physical AI combined with advanced manufacturing opens a stranger possibility: machines that redesign themselves.

A robot that understands its own kinematics (world model), can reason about alternative designs (language + planning), and can fabricate new components (physical capability) could iteratively improve its own body for specific tasks.

This is evolution without biology: Lamarckian inheritance for machines. The robot that learns to climb stairs could literally redesign its legs based on what it learned.

Persistent Exploration Agents

Consider the requirements for exploring Europa's subsurface ocean:

- No real-time communication (light-speed delay)

- No human intervention for repairs

- Unknown environment requiring adaptation

- Multi-year mission duration

This is impossible for current robots. But an agent with:

- Language capability (to interpret high-level mission objectives)

- World models (to predict novel physics in alien environments)

- Embodied adaptation (to respond to unexpected conditions)

- Self-repair (through soft robotics and bio-hybrid materials)

...could operate autonomously for years in environments humans will never reach. Physical AI is how we extend human agency beyond human survival.

The Inversion of Manufacturing

Today: Humans design products → Robots manufacture them.

Tomorrow: Humans describe outcomes → AI designs and manufactures solutions.

"I need something that keeps my coffee warm for 4 hours, fits in my bag, and costs under $20 to produce."

A system with language understanding, world models (thermal dynamics, material properties), and physical manufacturing capability could design, prototype, test, and iterate—potentially producing novel solutions no human designer would have conceived.

This is generative manufacturing. Not 3D printing from human CAD files, but end-to-end creation from intent to object.

Collaborative Intelligence at the Speed of Thought

The integration of language and physical capability enables a new mode of human-AI collaboration:

A surgeon describes a novel procedure in natural language. The robotic system understands the intent, simulates the approach in its world model, identifies risks, suggests modifications, and—with approval—executes with superhuman precision.

A scientist sketches an experimental apparatus on a whiteboard. The AI interprets the sketch, understands the purpose, fills in engineering details, and builds it overnight.

An architect describes a feeling—"I want the space to feel like standing in a forest"—and the system generates, simulates, and could eventually construct physical spaces optimized for that phenomenological experience.

This is human creativity amplified by machine capability at every stage, from conception to physical reality.

The Civilizational Implications - What Changes When Machines Can Act

The forward-looking perspective requires acknowledging that Physical AI is not just a technology upgrade. It's a phase transition in what machines can be.

The End of the Skill Scarcity Assumption

Every economic system in human history has assumed that skilled physical labor is scarce. Artisans, surgeons, pilots, chefs—expertise embodied in human hands has always been limited by human training time and human lifespans.

Physical AI breaks this assumption. When embodied skills can be:

- Learned once and replicated infinitely

- Transferred across robot bodies

- Improved through continuous autonomous practice

...the economics of physical work transform as dramatically as the printing press transformed the economics of text.

This is not "robots taking jobs." It's the replicability of embodied expertise, and it changes everything from healthcare access to manufacturing geography to the meaning of craft.

The Expansion of Human Presence

Humans are fragile. We need oxygen, moderate temperatures, acceptable radiation levels, and gravity within a narrow range. This constrains where we can go and what we can do.

Physical AI extends human agency into:

- Deep ocean environments

- Planetary surfaces

- Disaster zones

- Microscale and nanoscale manipulation

- Hazardous industrial processes

Every environment hostile to human bodies becomes accessible to human intent, mediated by embodied AI. The sphere of human action expands beyond human survival.

The Acceleration of Physical Knowledge

Science progresses through the empirical loop: hypothesize, test, observe, refine. The bottleneck has always been physical experimentation—slow, expensive, dangerous.

Physical AI compresses this loop. When experiments can be designed by AI, executed by robots, and analyzed in integrated systems, the pace of physical discovery accelerates.

Materials science. Drug discovery. Energy systems. Climate interventions. Every field constrained by experimental throughput becomes unblocked.

We may be approaching an era in which physical knowledge accumulates faster than humans can absorb it, where AI not only discovers but also becomes the primary repository of understanding of the material world.

The Timeline Question - When Does This Matter?

LeCun's decision to leave Meta and seek a $3.5 billion valuation for AMI Labs represents a massive bet that systems reaching human-level intelligence in the physical world will emerge within the next decade. That timeline is debatable, but the capital flowing toward this thesis is not.

What's already here (2024-2026):

- Industrial deployment at scale (Amazon, Foxconn)

- Humanoid prototypes demonstrating integrated language-action capability (Figure, 1X)

- World models achieving meaningful physics prediction

- Dual-process architectures in production

What's emerging (2026-2028):

- General-purpose robot policies trained on pooled data (Open X-Embodiment and successors)

- Consumer-grade embodied assistants with natural language interfaces

- Sim2Real gaps closing through differentiable physics and Gen2Real

- First wave of truly multimodal foundation models (language + vision + action)

What's uncertain (2028+):

- Whether continuous learning at scale is achievable

- Whether alignment techniques transfer from digital to physical domains

- Whether economic models support mass deployment

- How quickly regulatory frameworks adapt

The safest prediction: the integration of language, world models, and embodied control will proceed faster than most expect because the component technologies are further along than public discourse suggests.

The companies and researchers who understand this as a stack integration problem—not a paradigm war—will have significant advantages.

The Threshold We're Crossing

For sixty years, artificial intelligence has been synonymous with symbol manipulation: pattern matching over discrete representations, from expert systems to deep learning to large language models. The computationalist paradigm treated intelligence as fundamentally algorithmic: the right function, applied to the right data, at sufficient scale.

Physical AI challenges this at the root.

The paradigm break is not cosmetic. It's not "LLMs plus robots." It is a different theory of what reasoning is:

- The old theory: Intelligence is pattern matching over representations. Scale the patterns, scale the intelligence.

- The new theory: Intelligence is a simulation of world dynamics. Ground the simulation in physics, and reasoning emerges from prediction.

LeCun has bet his reputation and billions on the new theory. The rest of the industry is still largely committed to the old one. Both cannot be right about what constitutes the core.

But here's what survives the paradigm break:

Language is not going away. The capacity for symbolic abstraction—goals, constraints, ethics, explanation, coordination—remains essential for any system we'd call generally intelligent. What's shifting is where that capacity lives architecturally.

Today, language-level cognition resides in autoregressive transformers that predict tokens. Tomorrow, it may live in systems grounded in world models, where language is learned from physics rather than physics being described by language.

The most defensible thesis:

Physical AI introduces a new core paradigm: intelligence grounded in simulation of a world model rather than token prediction. Autoregressive LLMs—as currently architected—are unlikely to be that core. But language-level cognition, possibly implemented in future non-autoregressive architectures, will remain essential for abstract reasoning, alignment, and coordination.

This is not "LLMs will be fine" or "LLMs are dead." The reasoning substrate is shifting, but symbolic abstraction survives the transition.

The economic implications are measured in trillions. Physical AI automates physical labor across manufacturing, logistics, healthcare, and exploration.

The scientific implications are measured in accelerated discovery—when experiments can be designed, executed, and analyzed by integrated AI systems, the pace of physical knowledge accumulation transforms.

The civilizational implications are measured in the expansion of what humanity can reach, build, and become.

But the intellectual implication is perhaps the most profound:

We are witnessing the first serious challenge to the idea that intelligence is computation over symbols.

LeCun may be right. He may be wrong. The next five years will tell. But the question he's asking, whether token prediction is a path to understanding or a sophisticated dead end, is the most important question in AI right now.

The re-materialization of intelligence is not a product roadmap. It's a hypothesis about the nature of the mind.

And we're about to find out if it's true.