The thermodynamic turn set a trajectory: AI systems are evolving from pure prediction to prediction embedded in optimization. Energy-based models evaluate entire configurations instead of committing tokens one at a time. Inference-time scaling implements search within autoregressive shells. The architecture is becoming layered: proposal, evaluation, iteration, grounding.

But this framing leaves a critical question unanswered: what does it actually mean for a neural system to provide formal guarantees?

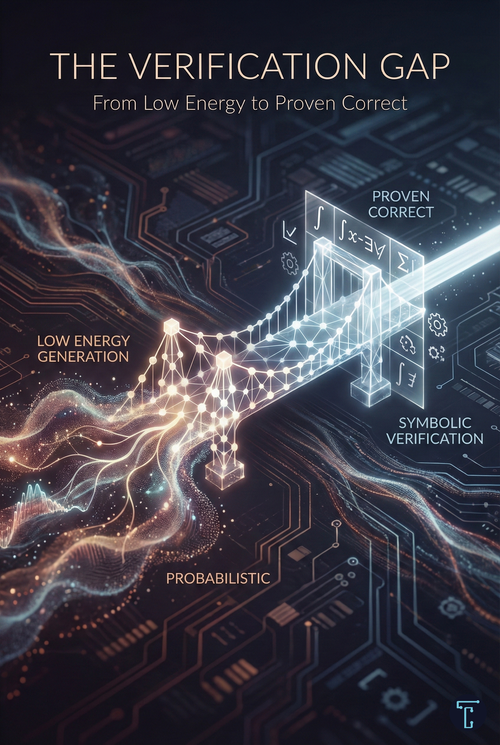

Claims that energy-based models excel at "verification" and "provable correctness" are frequent. They appear in position papers, research proposals, and investor decks. Yet the connection between low energy and logical certainty is rarely made explicit. The gap between thermodynamic intuition and the formal machinery needed for genuine verification is where the real work remains.

The Confidence Problem

Consider the operational reality of deploying language models in high-stakes domains. A model trained on legal documents generates contract language. A code synthesis system produces functions for medical device firmware. A financial assistant recommends portfolio adjustments.

In each case, the output looks plausible. The language is fluent. The code compiles. The reasoning chain appears coherent. But what does it mean to say the model is "95% confident" in its output?

Statistical confidence, as expressed by neural network probability distributions, is not the same as logical certainty. A model can assign a high probability to an output that is subtly or catastrophically wrong. The probability reflects learned patterns in training data, not entailment from first principles. When a language model says it is confident, it means that similar token sequences have appeared frequently in similar contexts, not that the conclusion necessarily follows from the premises.

For many applications, this distinction does not matter. A 5% error rate in customer service chatbots is tolerable. A 5% error rate in autonomous vehicle trajectory planning is not. A 5% error rate in formal proofs of cryptographic protocols would undermine the entire purpose of the exercise.

The domains where AI creates the most value are increasingly those where probabilistic correctness is insufficient. Energy infrastructure, aerospace systems, medical diagnostics, and financial settlement are not markets that can absorb occasional hallucinations. They require outputs that are not just likely but provably correct.

The False Promise of Energy

This is where the thermodynamic framing, taken naively, is misleading.

Energy-based models assign scalar values to configurations: low energy indicates compatibility; high energy indicates tension or violation. This is a powerful representational primitive. Unlike autoregressive models, which must distribute probability mass across all possible continuations, EBMs can mark invalid configurations as high-energy without constructing a normalized distribution.

But low energy is not the same as logical validity.

An energy function encodes soft preferences learned from data. It captures statistical regularities, correlations, and patterns of co-occurrence. A configuration might have low energy because it resembles training examples, not because it satisfies formal axioms. The energy landscape is shaped by gradient descent on finite samples, not by logical inference over symbolic rules.

Consider a simple example: an energy-based model trained to evaluate mathematical proofs. After sufficient training, it might assign low energy to proofs that follow common patterns—induction arguments, contradiction setups, case analyses. It might identify that certain proof structures are more "proof-like" than others. But this is recognition, not verification. The model has learned what proofs tend to look like, not what makes a proof valid.

A genuine verifier does something categorically different. It takes a candidate proof and, step by step, checks that each inference follows from the axioms and previously established lemmas. The checking process is mechanical; it does not depend on having seen similar proofs before. A proof is valid because of its logical structure, not because it resembles other valid proofs in a training set.

This distinction—between learned compatibility and logical entailment—is the verification gap.

What Formal Verification Actually Requires

Formal verification is the process of establishing, through rigorous logical methods, that a system satisfies a specification. The key property is machine-checkability: the verification artifact must be interpretable by an algorithm that can confirm validity without human judgment.

The tools of formal verification include:

Proof assistants like Lean, Coq, and Isabelle provide interactive environments where humans (or machines) construct proofs that are checked at each step. A proof in Lean is not a natural language argument; it is a sequence of tactic applications that the Lean kernel validates against its foundational type theory. If the proof compiles, it is correct—by construction.

SMT solvers (Satisfiability Modulo Theories) determine whether logical formulas involving arithmetic, bit vectors, arrays, and other theories are satisfiable. They are the workhorses of industrial formal verification, used to verify hardware designs, security protocols, and safety-critical software.

Model checkers exhaustively explore the state space of finite systems to verify properties expressed in temporal logic. They can prove that a concurrent protocol never deadlocks, or that a hardware circuit never enters an invalid state.

What these tools have in common is that their outputs are mechanically verifiable. When an SMT solver returns "unsatisfiable," it can provide a proof certificate that an independent checker can validate. When a proof assistant accepts a theorem, the kernel has mechanically confirmed every inference step.

This is radically different from a neural network reporting high confidence.

The Asymmetry That Matters

There is a deep computational asymmetry at play here, one that grounds the entire enterprise of formal methods.

For many problems, checking a proposed solution is much easier than finding one. This asymmetry, formally captured by the P versus NP question, is the foundation of modern cryptography and the engine of formal verification. It is also key to understanding why neural-symbolic hybrid systems can be both powerful and trustworthy.

Consider theorem proving. Finding a proof of a novel mathematical conjecture might take years of human effort or days of neural network search. But checking that a proof is valid can be done in time proportional to the length of the proof by a simple mechanical process. The proof assistant's kernel is a small, well-understood program; Lean's core type checker is about 6,000 lines of well-audited code. The neural network that generated the proof candidate is a billion-parameter black box trained on terabytes of data.

This asymmetry has a precise mathematical formulation. The class NP contains problems where proposed solutions can be verified in polynomial time. The class P contains problems that can be solved in polynomial time. Whether P equals NP is famously unknown, but the practical reality is clear: for many problems we care about, such as scheduling, routing, satisfiability, and optimization, verification is much cheaper than finding a solution.

The PCP theorem takes this further. It establishes that for problems in NP, there are proof systems in which the verifier needs to examine only a constant number of randomly chosen bits to, with high probability, confirm that a proof is valid. Three bits. The proof might be enormous, encoding a complex computational history, but its validity can be probabilistically checked with a handful of spot-checks.

This asymmetry creates an opportunity: we can use unreliable, creative, probabilistic systems to generate candidates, then use reliable, mechanical, deterministic systems to filter them.

AlphaProof, published in Nature in November 2025, exemplifies this architecture. The system achieved silver medal performance at the 2024 International Mathematical Olympiad—the first AI to reach that level on formally verified solutions. The key phrase is "formally verified": AlphaProof generates proofs in Lean, a proof assistant that accepts only logically sound arguments. The proofs are not evaluated by human judges applying subjective standards. They are mechanically checked by a kernel that has been formally verified against its specification.

The technical details illuminate the pattern. AlphaProof combines an AlphaZero-style reinforcement learning framework with a pre-trained language model fine-tuned on mathematical text. The system performs a tree search over possible proof tactics, guided by a value network that estimates the probability of completing the proof and a policy network that proposes candidate next steps. For the hardest problems, AlphaProof employs "Test-Time RL" by generating millions of related problem variants and training on them during inference to adapt to the specific challenge.

All this machinery is probabilistic, heuristic, and potentially buggy. The value estimates are approximations. The policy network might suggest irrelevant tactics. The tree search might explore unpromising branches. But none of this matters for the correctness of the final output because every proof candidate passes through Lean's kernel.

The IMO problem P6, considered the hardest of the competition and solved by only 5 of 609 human participants, was solved by AlphaProof after extended computation. The proof the system found is not merely plausible—it is mechanically verified, step by step, to follow from the axioms. The creative, unreliable generator proposed; the simple, reliable checker verified.

This is the pattern. Generation is cheap. Verification is what creates trust.

The Neurosymbolic Bridge

The architecture emerging from this insight is neurosymbolic: neural systems for generation and search, symbolic systems for verification and constraint enforcement.

The neural component provides what symbolic systems lack: the ability to handle noise, generalize from examples, and operate in high-dimensional continuous spaces. Neural networks excel at pattern recognition, fuzzy matching, and creative recombination. They can look at a problem and propose approaches that would not occur to a pure search algorithm.

The symbolic component provides what neural systems lack: guarantees. A proof that compiles in Lean is correct. A specification that passes an SMT solver is satisfiable. A model that survives a bounded model checker has no bugs of the specified type in the explored state space.

The generate-and-verify loop combines these strengths:

- A neural system proposes candidate solutions—proofs, programs, designs, plans

- A symbolic system verifies whether the candidate meets the specification

- Failed verification provides a signal for the neural system to revise

- Iteration continues until verification succeeds or resources are exhausted

This is not a new idea. The generate-and-test paradigm has been central to AI since the field's inception. What is new is the scale at which neural generation can operate and the sophistication of the verification layer that can be used.

Energy Functions as Constraint Encodings

Where do energy-based models fit in this architecture?

The most promising role for EBMs is not as replacements for formal verification but as differentiable approximations to symbolic constraint checking. This allows gradient-based optimization to guide generation toward regions of the solution space likely to satisfy formal constraints.

Consider SATNet, a differentiable maximum satisfiability solver developed at CMU. SATNet encodes logical constraints as an energy function and uses gradient descent to find low-energy (constraint-satisfying) configurations. The crucial insight is that the energy function is derived from the logical structure of the constraints—it is not learned from data. The gradients point toward satisfying assignments because the energy function is designed to equate satisfaction with energy minimization.

This is a different use of energy than in a learned EBM. The energy function does not encode statistical regularities; it encodes logical structure. Low energy means constraint satisfaction, not pattern recognition.

Differentiable constraint solvers, such as SATNet, can be embedded into larger neural architectures, enabling end-to-end training of systems that combine learned perception with logical reasoning. A vision system might learn to recognize Sudoku digits from images, while a SATNet layer ensures the proposed solution satisfies Sudoku constraints. Perception is learned; constraint checking is structural.

This points toward a layered architecture where energy-based reasoning mediates between neural perception and symbolic verification:

- Perception layer: Neural networks process raw inputs (images, text, sensor data)

- Energy-based reasoning layer: Differentiable constraint solvers guide search toward constraint-satisfying regions

- Formal verification layer: Symbolic checkers provide hard guarantees on final outputs

The energy layer is not the final arbiter of correctness. It is a soft guide that makes the hard verification layer's job easier by proposing better candidates.

Domains Where Verification Enables Trust

The verification gap matters most in domains where trust cannot be delegated to statistical averaging.

Code synthesis for safety-critical systems. When an AI generates code for medical devices, avionics, or nuclear plant control systems, the code must be provably correct—not merely likely correct. The FDA, FAA, and NRC do not accept "95% confident" as a certification standard. Formal methods provide the artifacts required for regulatory approval: proofs that code satisfies specifications, bounded model-checking results demonstrating the absence of runtime errors, and test coverage metrics derived from formal coverage criteria.

Hardware design verification. A single bug in a CPU can cost billions of dollars (see Intel's Pentium FDIV bug). Hardware verification has been the greatest success story of formal methods in industry. Modern chips are accompanied by massive verification efforts that prove the correctness of arithmetic units, memory controllers, and cache coherence protocols. Neural systems that propose hardware designs must interface with this existing verification infrastructure.

Cryptographic protocol analysis. Security protocols are notoriously difficult to get right. Subtle bugs can lurk for years before adversaries discover them. Formal verification of cryptographic protocols—proving that no sequence of messages can violate secrecy or authentication properties—is the only reliable way to establish security. AI systems that help design protocols must produce outputs that can be fed into protocol verification tools.

Financial contract specification. Smart contracts and complex financial instruments encode legal obligations in executable form. Bugs in smart contracts have led to losses exceeding hundreds of millions of dollars. Formal specification and verification of financial contracts—proving that they behave as intended under all market conditions—is an emerging field where the verification gap is acutely felt.

In all these domains, the value of AI is not to replace formal verification but to accelerate the process of generating candidates that pass verification. The verification layer remains essential; what changes is the efficiency of the generation layer.

The Tradeoff: Coverage vs. Certainty

Formal methods have a fundamental limitation: they require formal specifications.

A proof assistant can verify that a function correctly implements a specification. But who verifies that the specification is correct? The specification is a human artifact that captures or fails to capture the informal intent behind the system. No amount of formal verification can close this gap; it is the province of validation, testing, and domain expertise.

This creates an inherent tradeoff:

- Formal verification provides certainty within narrow, formally specified domains

- Neural methods provide broad coverage across informally specified domains with statistical confidence

The question is how to combine them effectively.

One approach is to use formal methods for core invariants—properties that must hold in all cases—while accepting statistical assurance for auxiliary behaviors. A medical device might formally verify that it never delivers a lethal dose by using statistical testing to assess the quality of its user interface. The critical safety property gets the hard guarantee; the nice-to-have properties get the soft assurance.

Another approach is to use neural systems to generate formal specifications themselves. If a model can translate informal requirements into formal properties, the verification gap shifts upstream—from verifying code to verifying specifications. Early work on specification synthesis suggests this is possible, though the specifications produced still require human review.

The honest position is that formal verification is not a silver bullet. It solves one problem: confirming that a system meets its specification, while leaving others open. But the problem it solves is important enough to warrant serious investment, especially as AI systems take on roles previously reserved for highly accountable human professionals.

What Changes When Verification Scales

If the neurosymbolic pattern succeeds—if we build systems that efficiently generate candidates and reliably verify them—several things follow.

The liability landscape shifts. When an AI system can prove that its output is correct, the question of who is responsible for errors changes character. If the proof is valid, the error must lie in the specification or the verification tool—both of which are much smaller and more auditable than the neural generator. Formal proofs create clear boundaries of accountability.

Trust becomes compositional. A verified component can be trusted when integrated into a larger system. If module A is proven correct against specification S_A, and module B is proven correct against specification S_B, and the interface between them is formally specified, the combined system inherits guarantees from its components. This is how high-assurance systems are built in practice; AI-generated components can participate in this ecosystem if they are accompanied by proofs.

The skill profile of AI engineers changes. Building neurosymbolic systems requires expertise in both machine learning and formal methods—disciplines that have historically been quite separate. The engineers who can bridge this gap will be unusually valuable. The tools that make crossing the bridge easier will see wide adoption.

The bar for "AI-generated code" rises. Today, code synthesis systems are evaluated on whether their outputs compile and pass test suites. Tomorrow, the standard may be whether their outputs come with proofs. This is a harder problem, but it is the problem worth solving.

The Verification Gap

The verification gap tells us what remains to be built.

The Path Forward

Closing the verification gap requires progress on several fronts.

Autoformalization at scale. The bottleneck in systems like AlphaProof is translating informal mathematical statements into formal specifications. This autoformalization step currently requires significant manual effort or produces unreliable results. Advances in language models are beginning to change this. Recent work shows that LLMs can translate natural language mathematics into Lean statements with increasing accuracy. As autoformalization improves, the set of problems amenable to formal verification expands.

Richer specification languages. Current formal methods work best for well-defined properties: absence of runtime errors, conformance to type specifications, and bounded reachability. More complex properties, such as "the user interface is intuitive," "the recommendation is fair," and "the explanation is helpful," are difficult to formalize. Developing specification languages that can capture richer notions of correctness and verification techniques that can handle them remains an open research problem.

Efficient proof search. Even with neural guidance, formal proof search is computationally expensive. AlphaProof required days of computation for individual IMO problems. For verification to be practical in software development workflows, proof search must be orders of magnitude faster. This requires better neural heuristics, more efficient symbolic backends, and tighter integration between the two.

Verification-aware training. Current language models are trained on text prediction objectives that know nothing about formal correctness. Models trained with verification in the loop—using proof assistant feedback as a training signal—should generate candidates that are more likely to verify. Early work on RLVR (Reinforcement Learning with Verifiable Rewards) points in this direction, using formal checkers to provide a reward signal during training.

Incremental verification. Full formal verification of large systems is often impractical. Incremental approaches—verifying properties of changes rather than whole systems, using assume-guarantee reasoning to decompose verification tasks, caching and reusing proof artifacts—can make verification practical for systems of realistic scale.

The verification gap will not close overnight. But the trajectory is clear: as neural systems become more capable at generating candidates and as formal tools become more accessible and efficient, the combination becomes increasingly powerful. The question is not whether neurosymbolic verification will matter, but which organizations will build the expertise to deploy it effectively.

The thermodynamic turn is real. AI systems are evolving toward architectures that embed prediction into optimization, evaluate entire configurations rather than committing sequentially, and allocate compute dynamically based on problem difficulty.

But the thermodynamic metaphor, taken alone, is insufficient. Low energy is not logical validity. Learned compatibility is not formal entailment. The gap between energy minimization and genuine verification is where serious engineering remains.

The systems that matter in high-stakes domains will not be the ones that generate plausible outputs. They will be the ones that can prove their outputs are correct—or at minimum, prove that their outputs satisfy critical safety properties within formally specified bounds.

Generation is cheap. Verification is what creates trust.

The thermodynamic turn told us where the architecture is heading. The verification gap tells us what remains to be built.